Best UI Frameworks for AI Agents in 2026

The UI framework you pick determines how users interact with your AI agents. We tested the top 7 frameworks and ranked them by ease of use, real-time streaming support, and developer experience. Python-based tools dominate, but React Server Components are changing the landscape. This guide covers each framework with practical examples.

Quick Comparison: Top 7 Frameworks

Over 60% of AI agent prototypes use Python-based UI libraries, with real-time streaming support as the most requested feature. Here's how the leading frameworks compare:

For Python Developers:

- Chainlit: Best for conversational AI with built-in observability

- Streamlit: Best for data-heavy agents with dashboards

- Gradio: Best for rapid prototyping and demos

For JavaScript/TypeScript Developers:

- Vercel AI SDK: Best for production apps with React Server Components

- LangChain.js: Best for multi-step agent workflows

- OpenWebUI: Best for self-hosted, privacy-focused deployments

Low-Code Option:

- Bubble + AI Plugins: Best for non-developers building agent UIs

The right choice depends on your stack, agent complexity, and whether you need streaming responses, multimodal inputs, or built-in debugging tools.


What Makes a Good AI Agent UI Framework?

UI frameworks for AI agents provide pre-built components for chat interfaces, feedback loops, and visualizing agent thought processes. The best frameworks share these characteristics:

Real-time streaming is expected now. Users want to see the agent "thinking" as tokens stream in, not wait for complete responses. Frameworks should handle Server-Sent Events (SSE) or WebSocket connections without custom code.
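To make the streaming requirement concrete, here is a minimal sketch of what a framework does for you when it streams tokens over SSE. It uses only the Python standard library. The names `fake_token_stream` and `to_sse_events`, and the `[DONE]` end-of-stream sentinel, are illustrative assumptions and not part of any framework covered here.

```python
import json
from typing import Iterable, Iterator


def fake_token_stream(text: str) -> Iterator[str]:
    """Stand-in for an LLM client that yields tokens one at a time (hypothetical)."""
    for word in text.split(" "):
        yield word + " "


def to_sse_events(tokens: Iterable[str]) -> Iterator[str]:
    """Wrap each token in a Server-Sent Events frame: a 'data:' line plus a blank line."""
    for token in tokens:
        # JSON-encode the payload so a newline inside a token cannot break the frame.
        yield f"data: {json.dumps({'token': token})}\n\n"
    # Sentinel frame so the client knows the stream has ended (an assumed convention).
    yield "data: [DONE]\n\n"


events = list(to_sse_events(fake_token_stream("Hello from the agent")))
for event in events:
    print(event, end="")
```

A web server would send these frames with the `text/event-stream` content type, and the browser would append each token to the message as it arrives.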

Multimodal support separates prototypes from production tools. Can users upload images, PDFs, or audio files? Can the agent respond with data visualizations, embedded media, or interactive components? Support for multiple input types reduces friction.
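As a rough illustration of what "multiple input types" means in practice, the sketch below routes an uploaded file to a handler based on its MIME type. The handler names (`vision`, `transcription`, `document`) are hypothetical labels chosen for this example, not an API from any of the frameworks.

```python
import mimetypes


def route_upload(filename: str) -> str:
    """Return the name of the (hypothetical) handler that should process an upload."""
    mime, _ = mimetypes.guess_type(filename)
    if mime is None:
        return "unsupported"
    if mime.startswith("image/"):
        return "vision"
    if mime.startswith("audio/"):
        return "transcription"
    if mime == "application/pdf":
        return "document"
    return "unsupported"


for name in ["chart.png", "report.pdf", "voice.mp3"]:
    print(name, "->", route_upload(name))
```

Frameworks with built-in multimodal support do this kind of dispatch for you; with the others, you write it yourself.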

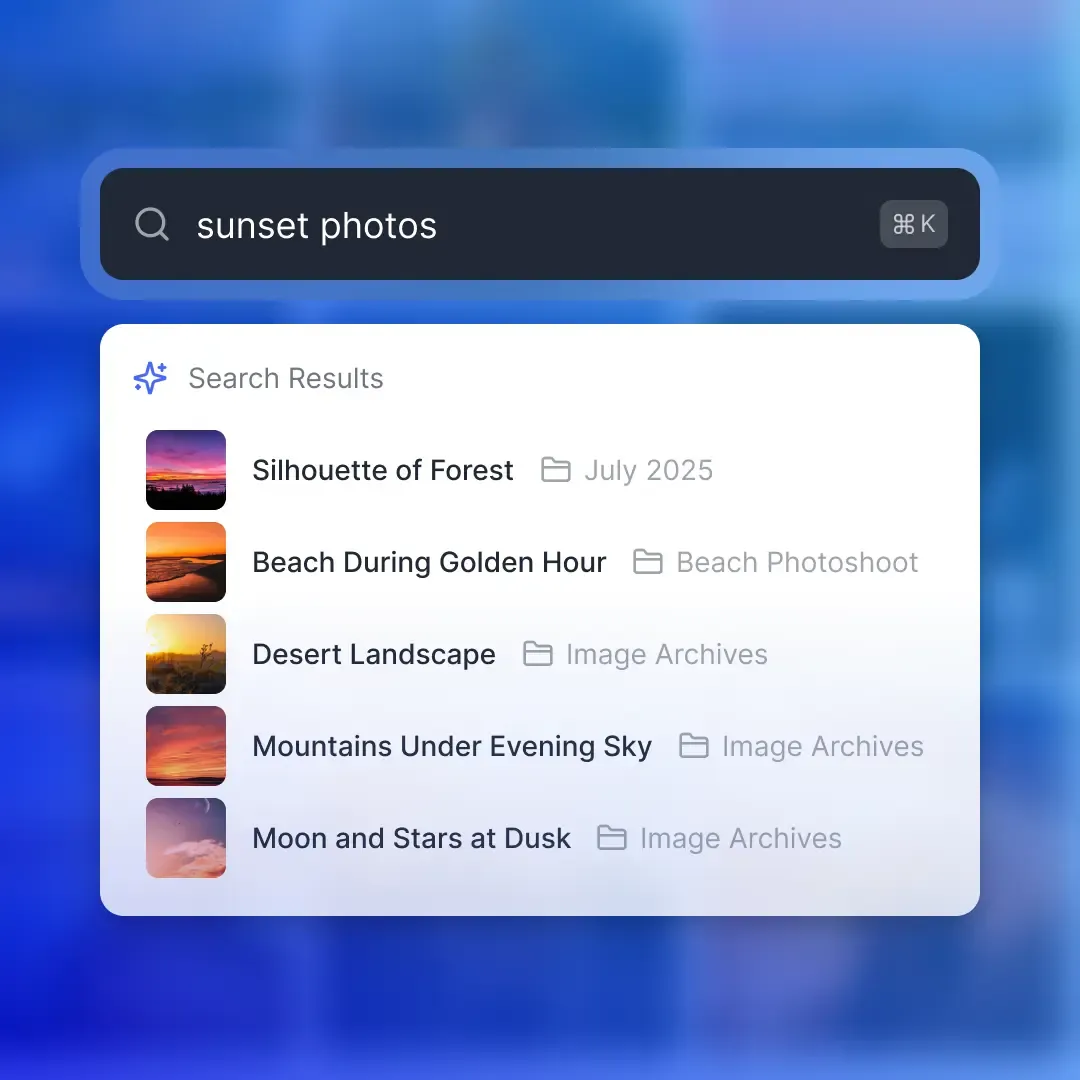

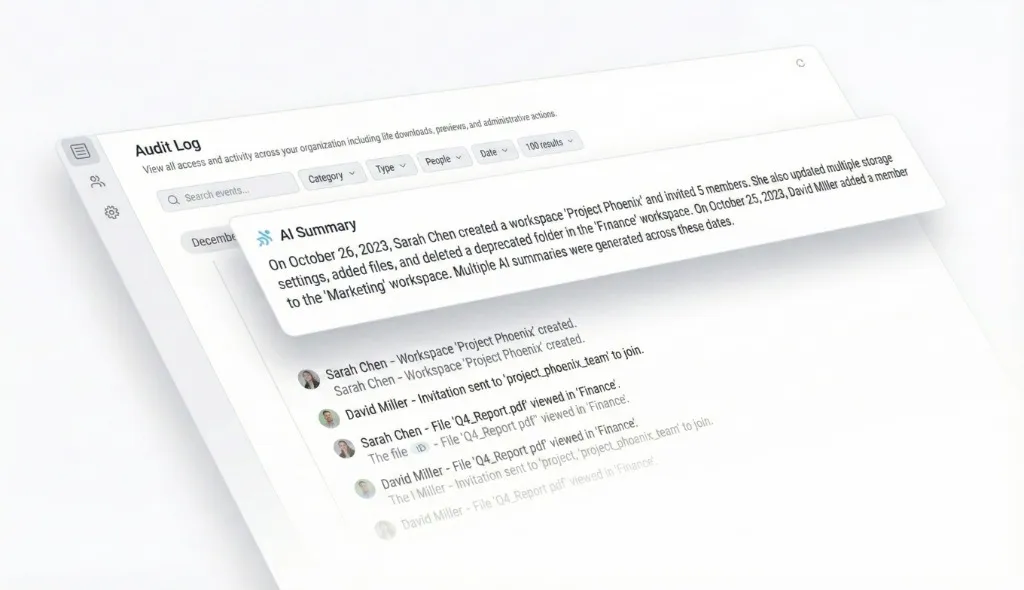

Observability into agent steps builds user trust. When an agent searches a database, calls an API, or reasons through a problem, showing these intermediate steps prevents the "black box" feeling. The best frameworks visualize tool calls and decision trees automatically.
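The idea behind step visualization can be sketched in a few lines: wrap each tool so every call is recorded, then let the UI render the records. This is a simplified, framework-independent illustration; `traced_tool`, `steps`, and `search_database` are hypothetical names, and real frameworks provide their own mechanisms.

```python
import functools
import time
from typing import Any, Callable

# Records that a UI could render as intermediate step cards.
steps: list[dict[str, Any]] = []


def traced_tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Record every call to a tool, including its arguments, result, and duration."""
    @functools.wraps(fn)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        start = time.perf_counter()
        result = fn(*args, **kwargs)
        steps.append({
            "tool": fn.__name__,
            "args": args,
            "kwargs": kwargs,
            "result": result,
            "seconds": time.perf_counter() - start,
        })
        return result
    return wrapper


@traced_tool
def search_database(query: str) -> list[str]:
    # Hypothetical tool; a real one would query a datastore.
    return [f"row matching {query!r}"]


search_database("overdue invoices")
for step in steps:
    print(f"Used tool: {step['tool']} -> {step['result']}")
```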

Low boilerplate for common patterns. Chat interfaces need typing indicators, message streaming, markdown rendering, code blocks with syntax highlighting, and scroll-to-bottom behavior. Frameworks that bundle these reduce time to first demo from days to hours.

According to our testing, Chainlit and Vercel AI SDK scored highest for combining all four characteristics in a production-ready package.

1. Chainlit

Chainlit is a Python framework built for conversational AI applications. It handles the operational details (chat UI, authentication, and observability) so developers can focus on agent logic instead of interface code.

Key Strengths:

- Built-in observability: Automatically visualizes intermediate steps like "Used tool: search_database" or "Reasoning: analyzing user intent." Users see what the agent does, building trust and debuggability.

- Multimodal by default: Accepts images, PDFs, audio, and video uploads without configuration. Agents can return formatted tables, charts, or interactive components.

- Production-ready auth: Native integrations with Okta, Azure AD, and Google. Add SSO with a config file instead of building custom auth flows.

- Zero boilerplate chat: Typing indicators, message streaming, markdown rendering, and code highlighting work out of the box.

Best For:

- Conversational agents where chat is the primary interface

- Applications requiring audit trails of agent decisions

- Teams deploying to enterprise environments with SSO requirements

- Python developers building LangChain or LlamaIndex agents

Limitations:

- Smaller community than Streamlit (released more recently)

- Occasional breaking changes between versions

- Less flexible if chat is one component in a larger dashboard

Pricing: Open source and free. Self-host or use Chainlit Cloud for managed deployments.

```python
import chainlit as cl

@cl.on_message
async def main(message: cl.Message):
    # Agent processes the message (`agent` is your own agent object, defined elsewhere)
    response = await agent.run(message.content)

    # Chainlit handles streaming and UI automatically
    await cl.Message(content=response).send()
```

2. Vercel AI SDK

Vercel AI SDK brings AI-native components to React applications with first-class support for React Server Components. The recently launched AI Elements library provides pre-built UI primitives specifically designed for agent interfaces.

Key Strengths:

- AI Elements components: Pre-built message threads, input boxes, reasoning panels, and code blocks based on shadcn/ui. Full design control without building from scratch.

- React Server Components integration: Stream React components (not just text) from the server. Agents can return charts, forms, or interactive elements that hydrate on the client.

- Type-safe streaming: The streamUI function provides end-to-end TypeScript support for streaming components from server to client.

- Agent abstraction (AI SDK 6): Define agents once with models, instructions, and tools, then reuse across your entire application with automatic UI streaming.

Best For:

- Production Next.js applications requiring polished UIs

- Teams already using React and TypeScript

- Applications where agents return structured data or interactive components (not just text)

- Developers who want full design control with pre-built primitives

Limitations:

- JavaScript/TypeScript only (no Python support)

- Steeper learning curve than Python options

- Requires understanding React Server Components for advanced features

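Pricing: Open source and free. Deploy to Vercel or self-host.

```typescript
import { streamUI } from 'ai/rsc';
import { openai } from '@ai-sdk/openai';
import { z } from 'zod';

const result = await streamUI({
  model: openai('gpt-4'),
  prompt: 'Show me sales data',
  // Render plain text responses as a React node
  text: ({ content }) => <div>{content}</div>,
  tools: {
    getSalesData: {
      description: 'Fetch sales analytics',
      parameters: z.object({}),
      generate: async () => {
        // Fetch the data and return a React component to render (omitted)
      },
    },
  },
});
```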

3. Streamlit

Streamlit is a general-purpose Python framework for building data-centric interactive applications. While not designed exclusively for AI agents, it's the most mature option for combining chat interfaces with data visualizations, dashboards, and custom layouts.

Key Strengths:

- Mature ecosystem: Large community, extensive documentation, and thousands of existing examples

- Data visualization excellence: Native integration with matplotlib, plotly, altair, and pandas DataFrames

- Flexible layouts: Combine chat with sidebars, metrics, charts, and global widgets in a single application

- Broad use cases: If your chatbot is one component in a larger AI product, Streamlit handles the full scope

Best For:

- Agents that analyze data and return visualizations

- Applications embedding chat in dashboards or larger UIs

- Teams already using Python for data science workflows

- Rapid prototyping when you need both chat and data display

Limitations:

- Not purpose-built for conversational AI (requires more boilerplate than Chainlit)

- Less elegant for pure chat applications

- Streaming and intermediate step visualization require custom implementation

Pricing: Open source and free. Streamlit Cloud offers managed hosting.

```python
import streamlit as st

# Chat interface (`agent` and `create_chart` are your own objects, defined elsewhere)
if prompt := st.chat_input("Ask your question"):
    st.chat_message("user").write(prompt)
    response = agent.run(prompt)
    st.chat_message("assistant").write(response)

    # Display data visualization alongside chat
    st.plotly_chart(create_chart(response.data))
```

4. Gradio

Gradio turns Python functions into interactive web demos with minimal code. Originally designed for machine learning model demos, it's become popular for prototyping AI agent interfaces when speed matters more than polish.

Key Strengths:

- Fast time to demo: Turn a Python function into a shareable web UI with minimal code

- Built-in hosting: Share demos via temporary public links without deploying infrastructure

- ML-native: Understands image uploads, audio inputs, and model outputs without configuration

- Hugging Face integration: Deploy directly to Hugging Face Spaces with one click

Best For:

- Internal demos and proof-of-concepts

- Sharing agent prototypes with non-technical stakeholders

- Educational contexts and tutorials

- Rapid iteration on agent behavior before building production UIs

Limitations:

- Less polished than Chainlit or Vercel AI SDK for production apps

- Limited observability into agent steps

- Customization requires understanding Gradio's theming system

Pricing: Open source and free. Hugging Face Spaces offers free hosting.

```python
import gradio as gr

def chat(message, history):
    # `agent` is your own agent object, defined elsewhere
    response = agent.run(message)
    return response

gr.ChatInterface(fn=chat).launch()
```

5. LangChain.js (with Vercel AI SDK)

LangChain.js provides agent orchestration (tools, memory, reasoning) while Vercel AI SDK handles the UI layer. The combination works well for complex multi-step agents that need both orchestration logic and streaming interfaces.

Key Strengths:

- Agent orchestration built-in: ReAct agents, tool calling, memory management, and retrieval chains without custom code

- Extensive tool ecosystem: Pre-built integrations with databases, APIs, search engines, and file systems

- Streaming support: Native streaming for both text responses and intermediate steps

- TypeScript-native: Full type safety across agent logic and UI components

Best For:

- Multi-step agents that use tools and maintain conversation memory

- Applications requiring integration with multiple data sources

- Teams building with TypeScript/Node.js stacks

- Developers who want framework abstractions for agent patterns

Limitations:

- Requires combining two frameworks (LangChain for logic, Vercel AI SDK or custom UI for interface)

- Steeper learning curve than Python options

- Abstraction layers can obscure what's happening under the hood

Pricing: Both frameworks are open source and free.

6. OpenWebUI

OpenWebUI is a self-hosted web interface for running AI agents entirely on your own infrastructure. It's the go-to choice for privacy-focused deployments, air-gapped environments, or teams that want full control over data and models. You pay only for your own hosting infrastructure.


7. Bubble + AI Plugins (Low-Code)

Bubble is a visual web development platform where non-developers build applications by dragging components instead of writing code. AI plugins let you add chat interfaces, agent workflows, and LLM integrations without touching JavaScript or Python.

Key Strengths:

- No-code interface building: Design chat UIs, input forms, and result displays visually

- AI plugin marketplace: Pre-built integrations with OpenAI, Claude, and custom API endpoints

- Full application framework: Combine agent UIs with user auth, databases, and payment processing in one platform

- Rapid iteration: Change designs and workflows without redeploying code

Best For:

- Non-technical founders building AI-powered products

- Agencies creating custom agent interfaces for clients

- MVPs where speed to market beats technical control

- Teams without JavaScript/Python developers

Limitations:

- Less control than code-based frameworks

- Monthly subscription costs for hosting

- Performance limits for complex applications

- Harder to debug when AI integrations fail

Pricing: Paid plans that include basic hosting; see Bubble's published pricing. Cost scales with usage and features.

How We Evaluated These Frameworks

We built the same conversational agent (a file management assistant) using each framework to compare developer experience, feature completeness, and production readiness. Our evaluation criteria:

Ease of Setup (20%): Time from framework installation to working chat interface. Chainlit and Gradio scored highest for quickest setup, while LangChain.js + Vercel AI SDK required more configuration.

Streaming Support (25%): Quality of real-time response streaming and intermediate step visualization. Chainlit and Vercel AI SDK performed best with built-in streaming and observability.

Multimodal Inputs (15%): Support for uploading images, PDFs, audio, and video. Chainlit handled all formats without configuration. Streamlit and Gradio required plugin installation.

Customization Flexibility (20%): How easily can you modify the UI to match your brand? Vercel AI SDK (shadcn/ui components) and Streamlit (custom CSS) offered the most control.

Production Readiness (20%): Authentication, error handling, deployment options, and documentation quality. Chainlit and Vercel AI SDK are production-tested, while Gradio is better for prototypes.

All frameworks were evaluated for their free/open-source tiers since most agent projects start with experimentation before committing to paid infrastructure.
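The five weights above sum to 100%, so an overall score is a weighted average of the per-criterion scores. The sketch below shows the arithmetic. The weights come from the criteria listed above; the per-criterion scores in `example_scores` are made-up numbers on an assumed 0-10 scale, used only to illustrate the calculation, and are not our test results.

```python
# Weights taken from the evaluation criteria above.
WEIGHTS = {
    "ease_of_setup": 0.20,
    "streaming_support": 0.25,
    "multimodal_inputs": 0.15,
    "customization": 0.20,
    "production_readiness": 0.20,
}


def weighted_score(scores: dict[str, float]) -> float:
    """Combine per-criterion scores into one number using the weights above."""
    if scores.keys() != WEIGHTS.keys():
        raise ValueError("scores must cover exactly the five criteria")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Hypothetical scores on a 0-10 scale, for illustration only.
example_scores = {
    "ease_of_setup": 8,
    "streaming_support": 9,
    "multimodal_inputs": 7,
    "customization": 6,
    "production_readiness": 8,
}

total = weighted_score(example_scores)
print(round(total, 2))  # 7.7
```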

Which Framework Should You Choose?

Choose Chainlit if: You're building a conversational AI product in Python where chat is the primary interface. The built-in observability and SSO support make it production-ready, and the specialized design reduces boilerplate.

Choose Vercel AI SDK if: You're building with React/Next.js and need polished, customizable UIs. AI Elements provides professional components out of the box, and React Server Components enable generative UIs that return more than text.

Choose Streamlit if: Your agent analyzes data and returns visualizations alongside chat responses. Streamlit combines chat interfaces with dashboards better than any alternative, and its mature ecosystem provides stability.

Choose Gradio if: You need to demo an agent quickly for internal stakeholders or teaching. The 5-line setup and instant public links make it perfect for experimentation, not production.

Choose LangChain.js if: You're building complex multi-step agents with tools, memory, and retrieval. Combine it with Vercel AI SDK for UI, and you get both orchestration logic and streaming interfaces.

Choose OpenWebUI if: Data privacy or compliance requirements mandate self-hosting. Of the seven, it's the one built specifically for air-gapped environments and teams running local models exclusively.

Choose Bubble if: You're non-technical but need a custom agent interface. The visual builder lets you create UIs without code, though you sacrifice control developers expect.

AI Agents Need Persistent Storage

Regardless of which UI framework you choose, production AI agents need persistent file storage for managing conversation history, user uploads, agent-generated reports, and intermediate artifacts. Fast.io provides cloud storage built for AI agents. Agents get their own accounts with 50GB free storage, no credit card required. The platform includes a comprehensive MCP (Model Context Protocol) server with 251 tools for file operations, plus built-in RAG with Intelligence Mode for semantic document search.

Agent-specific features:

- Ownership Transfer: Agents can build workspaces and shares, then transfer ownership to human users while maintaining admin access

- OpenClaw Integration: Natural language file management via ClawHub with zero configuration

- URL Import: Pull files from Google Drive, OneDrive, Box, or Dropbox without local downloads

- Webhooks: Get notified when files change to trigger reactive workflows

- File Locks: Prevent conflicts when multiple agents access the same files concurrently

The free agent tier includes 5,000 monthly credits that cover storage, bandwidth, and AI token usage. Agents sign up like human users and work alongside teams in shared workspaces. For teams building AI agents, combining a UI framework for the interface with Fast.io for persistent storage creates a complete development stack. Learn more at fast.io/storage-for-agents.

Beyond Chat: Future UI Patterns for Agents

The next generation of agent UIs moves beyond simple chat interfaces. Several emerging patterns show where the ecosystem is heading:

Generative UIs let agents return interactive components instead of text. Instead of describing a chart, the agent renders the actual chart. Vercel AI SDK's React Server Components and Google's A2UI project both support this pattern.

Inline tool visualization shows what tools the agent used without separate debug panels. Chainlit pioneered this with automatic intermediate step cards. Users see "Searched database" or "Called weather API" as the agent works.

Branching conversations let users explore alternative agent responses without losing context. Instead of a linear chat thread, the UI presents a tree of possibilities. This pattern works well for creative writing or strategic planning agents.
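One way to model the tree described above is to give each turn a parent and a list of children, then rebuild the linear context for any branch by walking back to the root. This is a minimal sketch of the data structure, assuming nothing about how a particular framework stores conversations; the `Turn` class and its methods are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(eq=False)
class Turn:
    role: str
    content: str
    parent: Turn | None = field(default=None, repr=False)
    children: list[Turn] = field(default_factory=list, repr=False)

    def reply(self, role: str, content: str) -> Turn:
        """Add a child turn; calling this twice on one turn creates two branches."""
        child = Turn(role, content, parent=self)
        self.children.append(child)
        return child

    def thread(self) -> list[str]:
        """Walk back to the root to rebuild the linear context for this branch."""
        path: list[str] = []
        node: Turn | None = self
        while node is not None:
            path.append(f"{node.role}: {node.content}")
            node = node.parent
        return list(reversed(path))


root = Turn("user", "Draft a tagline for our launch")
option_a = root.reply("assistant", "Ship faster.")
option_b = root.reply("assistant", "Build with confidence.")
follow_up = option_b.reply("user", "Make it shorter")

print(follow_up.thread())
```

Only the turns on the selected branch are sent to the model, so exploring `option_a` later does not disturb the context built under `option_b`.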

Agent workspaces separate different projects or contexts. Rather than one endless chat thread, users create workspaces for "Q1 Planning," "Bug Analysis," or "Customer Research." Fast.io's workspace model translates well to this pattern.

As agents become more capable, UIs will focus on control and transparency over speed. Users want to see what the agent did, approve actions before execution, and understand the reasoning behind decisions. The frameworks investing in observability and user trust will win the next phase.
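Approving actions before execution comes down to placing a gate between what the agent proposes and what actually runs. The sketch below shows that gate in isolation. `ProposedAction` and `execute_with_approval` are hypothetical names, and the `approve` callback stands in for whatever confirmation dialog a UI framework would show.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    description: str          # shown to the user before anything runs
    run: Callable[[], str]    # the side effect, deferred until approved


def execute_with_approval(
    action: ProposedAction,
    approve: Callable[[str], bool],
) -> str:
    """Run the action only if the approval callback returns True."""
    if not approve(action.description):
        return f"Skipped: {action.description}"
    return action.run()


delete_logs = ProposedAction(
    description="Delete 14 log files older than 30 days",
    run=lambda: "Deleted 14 files",
)

# In a real UI, `approve` would render a confirmation prompt; here it is hard-coded.
print(execute_with_approval(delete_logs, approve=lambda text: False))
print(execute_with_approval(delete_logs, approve=lambda text: True))
```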

Frequently Asked Questions

What is the best frontend for Python AI agents?

Chainlit is the best frontend for Python AI agents focused on conversational interfaces. It provides built-in chat UI, automatic observability for agent steps, multimodal support, and production-ready authentication. Streamlit is a strong alternative if your agent needs data visualizations alongside chat, while Gradio works well for rapid prototyping and demos.

How do I build a UI for an autonomous agent?

Start by choosing a framework that matches your stack. For Python agents, use Chainlit for conversational UIs or Streamlit for data-heavy applications. For JavaScript/TypeScript, use Vercel AI SDK with React. Set up streaming support so users see the agent working in real time, add observability to show intermediate steps like tool calls, and implement file upload capabilities for multimodal inputs. Most frameworks provide example templates for autonomous agents that you can customize.

Should I use Chainlit or Streamlit for my AI agent?

Use Chainlit if chat is your primary interface and you need built-in observability of agent steps. It's purpose-built for conversational AI with automatic streaming, typing indicators, and SSO support. Use Streamlit if your agent combines chat with data visualizations, dashboards, or complex layouts. Streamlit excels when chat is one component in a larger application, while Chainlit is optimized for pure conversational experiences.

Does Vercel AI SDK support streaming agent responses?

Yes, Vercel AI SDK has first-class streaming support through the streamUI function. It streams both text tokens and React Server Components from server to client in real time. AI SDK 6 introduced the Agent abstraction that automatically handles streaming for multi-step workflows, tool calls, and structured outputs. You get type-safe streaming across your entire application without custom WebSocket or SSE code.

Can I use these frameworks with local LLMs instead of OpenAI?

Yes, all seven frameworks support local LLMs. Chainlit, Streamlit, and Gradio work with any Python-based model. Vercel AI SDK and LangChain.js work with Ollama for running local models. OpenWebUI is specifically designed for self-hosted deployments with local models like Llama or Mistral. You maintain the same UI framework features while keeping models and data entirely on your infrastructure.

What file storage do AI agents need for production?

Production AI agents need persistent cloud storage for conversation history, user uploads, generated reports, and intermediate artifacts. Fast.io provides agent-specific storage with 50GB free, 251 MCP tools for file operations, built-in RAG with Intelligence Mode, and features like ownership transfer and webhooks. Agents get their own accounts and work alongside humans in shared workspaces.

Are these UI frameworks free to use?

Mostly. Six of the seven (Chainlit, Vercel AI SDK, Streamlit, Gradio, LangChain.js, and OpenWebUI) are open source and free. You only pay for hosting infrastructure if you deploy them. Chainlit Cloud and Streamlit Cloud offer managed hosting with free tiers. Vercel provides free Next.js hosting for small projects. Bubble is the only paid option, since it's a no-code platform that includes hosting; see its published pricing for current plans.
