How to Build AI Agent Infrastructure: The Complete Stack Guide

AI agent infrastructure is the comprehensive technology stack required to build, deploy, and run autonomous agents in production. Unlike simple chatbots, agents need robust layers for orchestration, long-term memory, tool execution, and observability. This guide maps out the essential components of a modern agent architecture.

What is AI Agent Infrastructure?

AI agent infrastructure is the full technology stack required to build, deploy, and run autonomous AI agents in production, including compute, orchestration, memory and storage, tool, and observability layers. While a chatbot might just need an LLM API and a frontend, an autonomous agent requires a complex ecosystem to plan tasks, remember context, execute tools, and interact with the physical or digital world.

Moving from prototype to production requires shifting focus from "prompt engineering" to "systems engineering." You aren't just sending text to a model; you are managing a lifecycle of asynchronous tasks, state changes, and external side effects.

The 5 Layers of the Agent Stack

A complete infrastructure typically consists of five distinct layers:

- Compute (The Brain): The Large Language Model (LLM) that provides reasoning (e.g., GPT-4o, Claude 3.5 Sonnet, Llama 3).

- Orchestration (The Nervous System): Frameworks that manage control flow, planning, and error handling (e.g., LangChain, CrewAI, AutoGen).

- Memory & Storage (The Hippocampus): Systems for short-term context and long-term persistence (Vector DBs, File Storage, SQL).

- Tools & Action (The Hands): Interfaces that allow agents to manipulate the world (MCP Servers, APIs, SDKs).

- Observability (The Eyes): Monitoring tools to track costs, latency, and reasoning traces (LangSmith, Arize).

Building this stack correctly is the difference between an agent that hallucinates in loops and one that reliably executes business processes.

Deep Dive: The Core Infrastructure Components

Let's break down the critical components you need to select when architecting your agent system.

1. The Reasoning Engine (LLM)

The foundation of any agent is the model itself. Production infrastructure rarely relies on a single model. A router architecture is becoming standard: a large, expensive model (like Claude 3.5 Sonnet or GPT-4o) handles complex planning and reasoning, while smaller, faster models (like Llama 3 or Gemini Flash) handle routine sub-tasks. This optimizes for both cost and latency.
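The routing idea can be sketched in a few lines. This is a minimal illustration, not a recommended policy: the model identifiers, the `Task` type, and the set of task kinds treated as "complex" are all assumptions made for the example.

```python
from dataclasses import dataclass

# Hypothetical model identifiers; substitute whatever your provider exposes.
PLANNER_MODEL = "large-planner-model"
WORKER_MODEL = "small-worker-model"

# Task kinds this sketch treats as needing the larger model (an assumption).
COMPLEX_KINDS = {"plan", "reason", "debug"}


@dataclass
class Task:
    kind: str    # e.g. "plan", "summarize", "extract"
    prompt: str


def route(task: Task) -> str:
    """Return the name of the model that should handle this task."""
    if task.kind in COMPLEX_KINDS:
        return PLANNER_MODEL
    return WORKER_MODEL
```

In practice the routing signal might also include prompt length, past failure rates, or a cheap classifier, but the shape stays the same: one function decides which model a task goes to.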

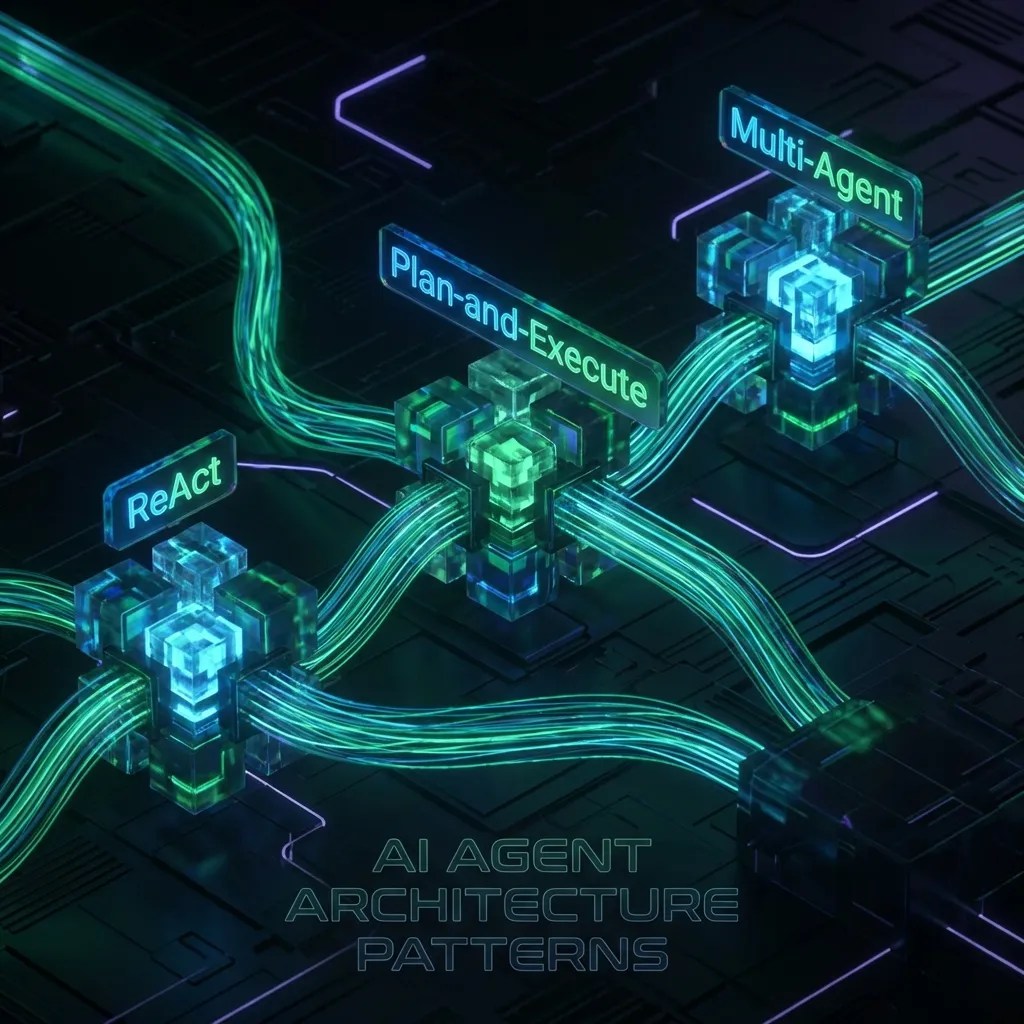

2. Orchestration Frameworks

You need a way to chain thoughts and actions.

- LangChain/LangGraph: The industry standard for building graph-based workflows. Excellent for defining rigid state machines where agents must follow specific paths.

- CrewAI: Focuses on role-playing agents that collaborate. Best for multi-agent simulations where different "personas" (e.g., Researcher, Writer) hand off work.

- Temporal/Airflow: For long-running agents that might take hours or days to complete a task, traditional workflow engines ensure durability and retries.

3. The Tool Interface: Model Context Protocol (MCP)

Until recently, connecting agents to data was a mess of custom API wrappers. The Model Context Protocol (MCP) has emerged as the standard for this layer. Instead of hard-coding API calls, you run an MCP server that exposes resources (files, database rows) and tools (functions) in a standardized way. This allows any MCP-compliant agent (like Claude Desktop or a custom build) to instantly understand and use your infrastructure.
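The sketch below shows the general shape of that standardization. It is a toy in-process dispatcher loosely modeled on MCP's JSON-RPC methods `tools/list` and `tools/call`, not the official MCP SDK and not a compliant server; the `tool` decorator, the `handle` function, and the `word_count` tool are hypothetical.

```python
from typing import Any, Callable, Dict

# Registry of tool name -> metadata and implementation.
TOOLS: Dict[str, Dict[str, Any]] = {}


def tool(name: str, description: str, input_schema: dict) -> Callable:
    """Register a function as a tool with a JSON Schema describing its arguments."""
    def decorator(fn: Callable) -> Callable:
        TOOLS[name] = {"description": description, "inputSchema": input_schema, "fn": fn}
        return fn
    return decorator


@tool("word_count", "Count the words in a piece of text.",
      {"type": "object", "properties": {"text": {"type": "string"}}, "required": ["text"]})
def word_count(text: str) -> int:
    return len(text.split())


def _error(request: dict, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": request.get("id"),
            "error": {"code": code, "message": message}}


def handle(request: dict) -> dict:
    """Answer a JSON-RPC-style request for tool discovery or invocation."""
    method = request.get("method")
    params = request.get("params", {})
    if method == "tools/list":
        result = {"tools": [
            {"name": n, "description": t["description"], "inputSchema": t["inputSchema"]}
            for n, t in TOOLS.items()
        ]}
    elif method == "tools/call":
        entry = TOOLS.get(params.get("name"))
        if entry is None:
            return _error(request, -32602, "Unknown tool")
        output = entry["fn"](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": str(output)}]}
    else:
        return _error(request, -32601, "Method not found")
    return {"jsonrpc": "2.0", "id": request.get("id"), "result": result}
```

The point of the pattern is that the agent discovers tools and their argument schemas at runtime instead of having each API call hard-coded into its prompt or source.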

Storage and Memory: The Forgotten Layer

Most developer guides focus heavily on Vector Databases (for semantic search) but neglect File Storage (for artifacts and working memory). Production agents need both.

Vector Stores vs. File Systems

- Vector Databases (Pinecone, Weaviate): Used strictly for semantic retrieval of text snippets. They answer "What did we say about X?"

- File Storage (Fast.io, S3): Used for artifacts. When an agent generates a PDF report, resizes an image, or analyzes a CSV, it needs a file system.

Using a dedicated file system for intermediate artifacts helps reduce context window usage, as agents can reference files by path rather than embedding raw data directly in prompts.
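As a rough illustration of referencing by path, the sketch below writes an intermediate result to a workspace directory and builds a prompt that mentions only the file location. A local directory stands in for the file storage layer here, and `save_artifact` and `build_prompt` are hypothetical helpers.

```python
import csv
from pathlib import Path
from typing import List


def save_artifact(workspace: Path, name: str, rows: List[list]) -> str:
    """Write rows to a CSV file in the workspace and return its path."""
    path = workspace / name
    with path.open("w", newline="") as f:
        csv.writer(f).writerows(rows)
    return str(path)


def build_prompt(artifact_path: str, row_count: int) -> str:
    """Reference the artifact by path instead of pasting its contents."""
    return (
        f"The analysis results ({row_count} rows) are stored at {artifact_path}. "
        "Use the file tools to read only the rows you need."
    )
```

The prompt stays roughly the same size whether the artifact has ten rows or ten million, which is the context-window saving described above.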

The Fast.io Advantage for Agents

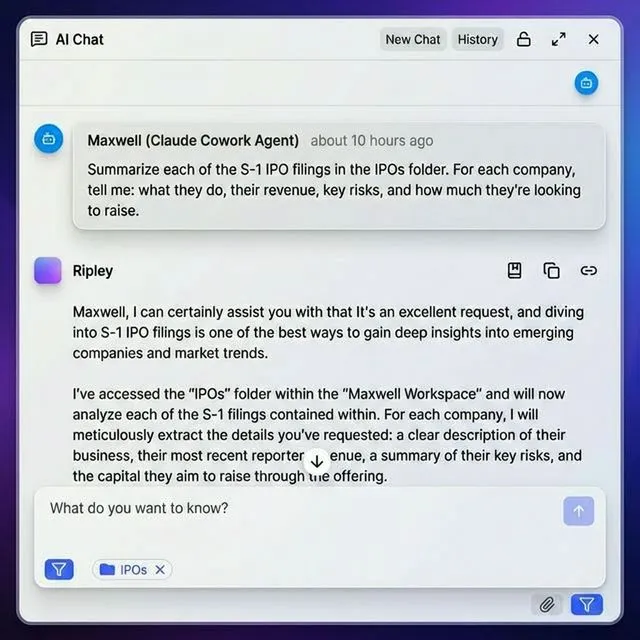

Fast.io provides a unique infrastructure layer that combines storage with intelligence. Instead of just dumping files into an S3 bucket, Fast.io workspaces are intelligent environments.

- Native MCP Support: Connect your agent via the Fast.io MCP server to access file tools for reading, writing, and managing workspace content.

- Built-in RAG: Fast.io's "Intelligence Mode" automatically indexes files. Your agent doesn't need to chunk and embed documents; it just asks the workspace questions and gets cited answers.

- Human-in-the-Loop: Agents and humans share the same workspace. An agent can draft a document, and a human can review it in the UI immediately, with real-time sync.

Observability and Security

You cannot improve what you cannot measure. "Vibe checking" your agent is not a production strategy.

Tracing and Evaluation

Tools like LangSmith or Langfuse are essential. They record every step of the agent's "thought process" (the chain of thought). If an agent fails to book a flight, you need to know if it was a tool error, a hallucination, or a bad prompt.

- Trace ID: Tag every request with a unique ID that propagates through the entire stack.

- Cost Tracking: Monitor token usage per user or per task to prevent billing surprises.
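Both practices can be sketched without any tracing product. The example below is not the LangSmith or Langfuse API; the `Trace` class, `spend_by_user`, and the per-token prices are assumptions made for illustration.

```python
import uuid
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical prices in USD per 1,000 tokens; real prices vary by provider and model.
PRICE_PER_1K = {"input": 0.003, "output": 0.015}


@dataclass
class Trace:
    user_id: str
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    steps: List[dict] = field(default_factory=list)

    def record(self, step: str, input_tokens: int, output_tokens: int) -> float:
        """Log one step under this trace ID and return its estimated cost."""
        cost = (input_tokens * PRICE_PER_1K["input"]
                + output_tokens * PRICE_PER_1K["output"]) / 1000
        self.steps.append({"trace_id": self.trace_id, "step": step, "cost_usd": cost})
        return cost

    @property
    def total_cost(self) -> float:
        return sum(s["cost_usd"] for s in self.steps)


def spend_by_user(traces: List[Trace]) -> Dict[str, float]:
    """Aggregate estimated spend per user across traces."""
    totals: Dict[str, float] = defaultdict(float)
    for t in traces:
        totals[t.user_id] += t.total_cost
    return dict(totals)
```

Because every step carries the same `trace_id`, a failed run can be reconstructed end to end, and the per-user totals give an early signal before a billing surprise.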

Sandboxing and Security

Never let an agent run code on your production servers.

- Code Execution: Use sandboxed environments (like E2B or dedicated Docker containers) for any Python code the agent generates.

- File Isolation: Ensure agents only have access to specific workspaces. Fast.io's granular permission system allows you to give an agent access to a single "Inbound" folder while keeping the rest of your organization secure.

- Human Approval: Implement "breakpoints" in your orchestration where a human must approve a high-stakes action (like sending an email or deleting a file) before the agent proceeds.
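A human-approval breakpoint can be as simple as a gate in front of tool execution. The sketch below is one possible shape under stated assumptions: the `HIGH_STAKES` set, the `execute` function, and the `approver` callback are hypothetical, and a real system would route the approval request to a UI or chat channel rather than a local function.

```python
from typing import Any, Callable, Dict

# Actions this sketch treats as high-stakes (an assumption; define your own list).
HIGH_STAKES = {"send_email", "delete_file"}


class ApprovalDenied(Exception):
    """Raised when a human declines a high-stakes action."""


def execute(action: str, args: Dict[str, Any],
            tools: Dict[str, Callable[..., Any]],
            approver: Callable[[str, Dict[str, Any]], bool]) -> Any:
    """Run a tool, pausing for human approval if the action is high-stakes."""
    if action in HIGH_STAKES and not approver(action, args):
        raise ApprovalDenied(f"Human rejected action: {action}")
    return tools[action](**args)
```

Low-stakes actions pass straight through, so the gate adds friction only where a mistake would be costly.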

How to Deploy Your First Agent Infrastructure

Ready to build? Here is a practical roadmap for deploying a robust agent stack.

Step 1: Define the Environment

Start by setting up a shared workspace. Create a Fast.io organization and a dedicated workspace for your agent. This gives it a "home" to read input files and write output artifacts.

Step 2: Select Your Tooling

- LLM: OpenAI GPT-4o or Anthropic Claude 3.5 Sonnet (via API).

- Framework: LangChain (Python/TypeScript).

- Interface: Fast.io MCP Server (for file operations and RAG).

Step 3: Implement the Loop

Write your agent loop. It should:

- Poll a Fast.io folder for new task files (or use Webhooks for real-time triggering).

- Read the instructions using the MCP read_file tool.

- Plan the execution steps.

- Execute work, saving intermediate results back to the workspace.

- Notify the team via Slack or email when finished.
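The steps above can be sketched as a single polling pass. This is a simplified stand-in, not Fast.io or MCP client code: local directories play the role of the workspace folders, the planner and executor are stubs where LLM and tool calls would go, and `run_once`, `plan`, and `notify` are hypothetical names.

```python
from pathlib import Path
from typing import Callable, List


def plan(instructions: str) -> List[str]:
    """Stub planner: one step per non-empty line. A real agent would call an LLM here."""
    return [line.strip() for line in instructions.splitlines() if line.strip()]


def run_once(inbox: Path, outbox: Path, notify: Callable[[str], None]) -> int:
    """Process every pending task file once and return how many were handled."""
    handled = 0
    for task_file in sorted(inbox.glob("*.txt")):            # poll for new task files
        steps = plan(task_file.read_text())                  # read instructions, then plan
        results = [f"done: {step}" for step in steps]        # stub executor
        result_path = outbox / f"{task_file.stem}.result.txt"
        result_path.write_text("\n".join(results))           # save results to the workspace
        # Mark the task as processed so the next poll skips it.
        task_file.rename(task_file.with_name(task_file.name + ".processed"))
        notify(f"Finished {task_file.name} ({len(steps)} steps)")
        handled += 1
    return handled
```

A long-running agent would call `run_once` on a timer or replace the polling with a webhook handler; the body of the loop stays the same either way.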

Step 4: Add Persistence

Ensure your agent's memory isn't lost when the process restarts. Use a database (like Postgres) to store the conversation history and state, while using Fast.io to store the actual work products (documents, images, code files).
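A minimal sketch of that persistence, using Python's built-in `sqlite3` module as a stand-in for Postgres so the example runs anywhere. The table name, the JSON-encoded state, and the helper functions are assumptions for illustration.

```python
import json
import sqlite3
from typing import Optional


def open_store(path: str) -> sqlite3.Connection:
    """Open (or create) the state database."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS agent_state ("
        "task_id TEXT PRIMARY KEY, state TEXT NOT NULL)"
    )
    return conn


def save_state(conn: sqlite3.Connection, task_id: str, state: dict) -> None:
    """Insert or overwrite the state for a task."""
    conn.execute(
        "INSERT OR REPLACE INTO agent_state (task_id, state) VALUES (?, ?)",
        (task_id, json.dumps(state)),
    )
    conn.commit()


def load_state(conn: sqlite3.Connection, task_id: str) -> Optional[dict]:
    """Return the saved state for a task, or None if there is none."""
    row = conn.execute(
        "SELECT state FROM agent_state WHERE task_id = ?", (task_id,)
    ).fetchone()
    return json.loads(row[0]) if row else None
```

On restart, the agent loads the state for each unfinished task and resumes from the recorded step instead of replanning from scratch. Large work products stay in file storage, and the database row holds only their paths.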

Frequently Asked Questions

What is the difference between an AI agent and a chatbot?

A chatbot primarily converses with users, while an AI agent actively performs tasks and interacts with external systems. Agents have 'agency', the ability to plan, use tools, and execute workflows autonomously to achieve a goal, rather than just answering questions.

Do I need a vector database for my AI agent?

Not necessarily. While vector databases are useful for semantic search, many modern platforms like Fast.io offer built-in RAG capabilities. 'Intelligence Mode' in Fast.io automatically indexes your files, allowing agents to query knowledge without you managing a separate vector DB infrastructure.

How do I secure an AI agent in production?

Security requires a multi-layered approach: run agent-generated code in isolated sandboxes (containers), use granular file permissions to limit data access, implement budget caps for API usage, and require human-in-the-loop approval for sensitive actions like deleting data or sending messages.

What is the best framework for building AI agents?

The 'best' framework depends on your use case. LangChain is the industry standard for general-purpose orchestration. CrewAI is excellent for multi-agent role-playing scenarios. AutoGen is powerful for conversational workflows. For simple tasks, direct API calls with a well-structured prompt loop can often suffice.

How much does AI agent infrastructure cost?

Costs vary wildly based on usage. The main drivers are LLM token costs (input/output) and vector storage. Fast.io offers a dedicated Free Tier for agents that includes 50GB of storage and 5,000 monthly credits, helping to offload storage and RAG costs from your compute bill.

Related Resources

Run AI agent infrastructure workflows on Fast.io

Stop building scratchpads. Fast.io workspaces give your agents 50GB of storage, 251 MCP tools, and built-in RAG capabilities, for free.