How to Implement Human-in-the-Loop for AI Agents

Human-in-the-loop (HITL) for AI agents is a design pattern where autonomous agents escalate decisions, request approvals, or hand off work to humans at defined checkpoints. This guide covers how to architect approval workflows, implement safe file-based handoffs, and maintain oversight without unnecessarily slowing down your automation pipeline.

What is Human-in-the-Loop (HITL) for Agents?

Human-in-the-loop (HITL) for AI agents is a systems architecture where autonomous agents are designed to pause, escalate, or collaborate with humans during their execution cycle. Unlike standard automation that runs start-to-finish, HITL workflows treat human judgment as a specialized "tool" that the agent can call upon when confidence is low or risks are high.

This is distinct from "human-on-the-loop," where humans passively monitor a running system. In a true HITL architecture, the human is an active dependency for specific state transitions, such as moving a document from "draft" to "published" or authorizing a payment over a certain threshold.

For developers building agentic systems, HITL is often the bridge between a prototype that mostly works and a production system that can be trusted with sensitive data. It combines the speed and scale of AI with the nuance and accountability of human decision-making.


Why AI Agents Need Human Oversight

While the goal of agentic AI is autonomy, total independence is rarely desirable for high-stakes business processes. Integrating a human loop addresses three key challenges:

- Error Mitigation: Agents can hallucinate or misinterpret ambiguous instructions. A human review step acts as a quality gate, catching errors before they propagate downstream.

- Accountability: For regulated industries or sensitive client interactions, a human "signer" is often legally or operationally required. Many organizations require human approval for AI actions that involve sensitive personal data.

- Edge Case Handling: Agents trained on historical data may fail when encountering novel situations. A "low confidence" trigger allows the agent to defer to a human rather than failing silently or making a bad guess.

Core HITL Design Patterns

Implementing HITL isn't just about pausing a script. It requires specific architectural patterns. Here are the three most common approaches:

1. The Approval Gate: In this pattern, the agent completes a unit of work (e.g., generating a report) and places it in a holding state. It cannot proceed to the next step (e.g., emailing the report) until a human signal is received. This is a common pattern for content generation and financial transactions.

2. The Escalation Trigger: The agent operates autonomously by default but monitors its own confidence metrics. If a confidence score falls below a defined threshold, the agent triggers an escalation event. This "management by exception" keeps humans out of the loop for routine tasks but brings them in for difficult ones.

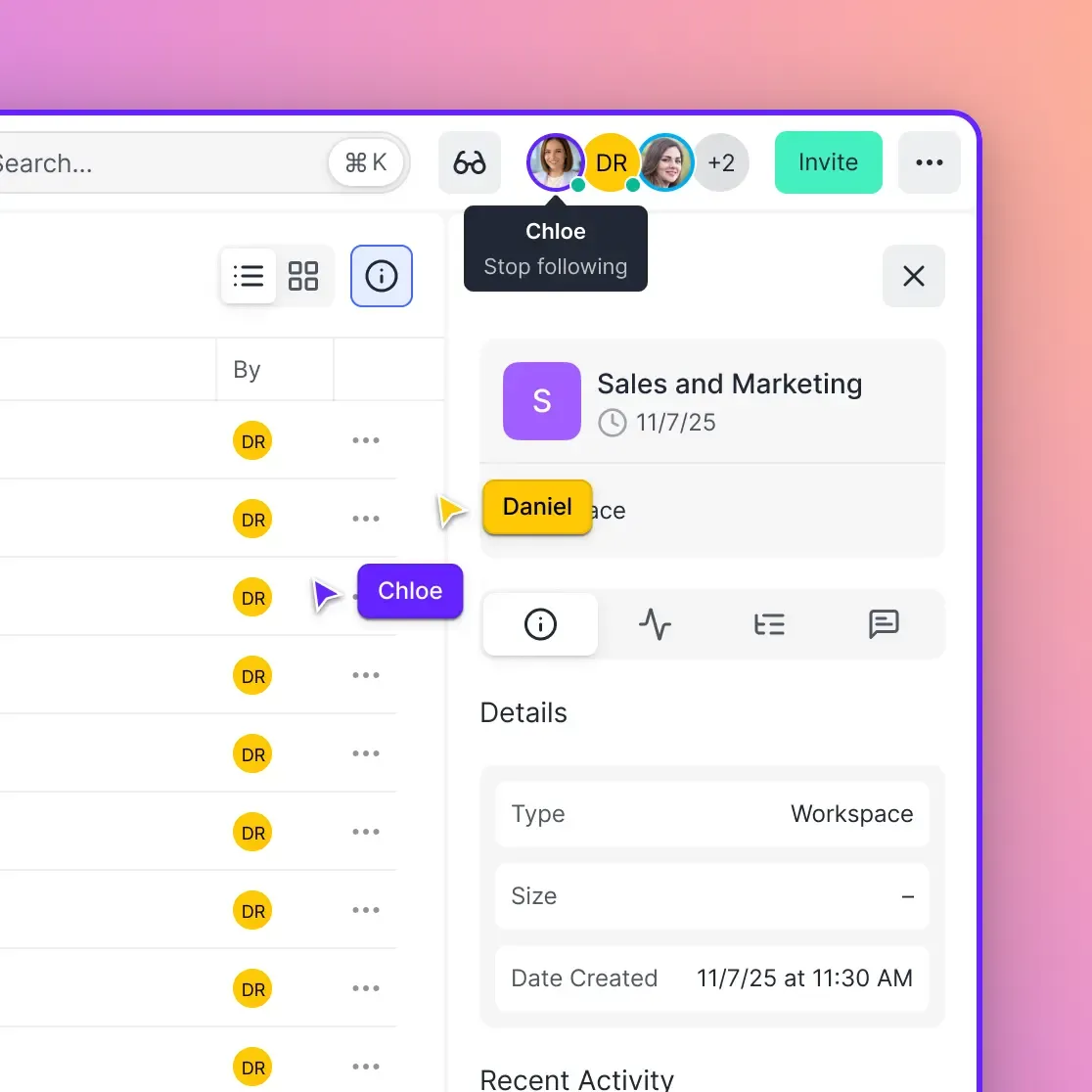

3. The Collaborative Workspace: Instead of a linear handoff, agents and humans work in parallel within a shared environment. An agent might organize raw files while a human editor selects the best shots. This requires a shared state, typically a cloud file system, where both parties can read and write assets concurrently.
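The approval gate and the escalation trigger often work together: the agent routes each proposed action by confidence and risk, and anything routed to a human waits in a holding state until it is approved. The sketch below assumes the agent reports a confidence score between 0 and 1; the threshold values, the `ProposedAction` fields, and the `ApprovalGate` class are hypothetical illustrations, not part of any Fast.io API.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO = "auto"                      # routine: the agent proceeds on its own
    NEEDS_APPROVAL = "needs_approval"  # held until a human decides


@dataclass
class ProposedAction:
    action_id: str
    description: str
    confidence: float    # agent's self-reported confidence in [0, 1] (assumed)
    amount: float = 0.0  # monetary value; 0 for non-financial actions


# Hypothetical policy values; tune them to your own risk tolerance.
CONFIDENCE_THRESHOLD = 0.8
PAYMENT_LIMIT = 1_000.0


def route(action: ProposedAction) -> Route:
    """Escalation trigger: send low-confidence or high-risk actions to a human."""
    if action.confidence < CONFIDENCE_THRESHOLD:
        return Route.NEEDS_APPROVAL
    if action.amount > PAYMENT_LIMIT:
        return Route.NEEDS_APPROVAL
    return Route.AUTO


class ApprovalGate:
    """Approval gate: a held action cannot be released until a human approves it."""

    def __init__(self) -> None:
        self._held: dict[str, ProposedAction] = {}
        self._approved: set[str] = set()

    def hold(self, action: ProposedAction) -> None:
        self._held[action.action_id] = action

    def approve(self, action_id: str) -> None:
        # Called from the human-facing side (review UI, webhook handler, etc.).
        if action_id not in self._held:
            raise KeyError(f"no held action {action_id!r}")
        self._approved.add(action_id)

    def release(self, action_id: str) -> ProposedAction:
        # The agent calls this before executing the next step.
        if action_id not in self._approved:
            raise PermissionError(f"{action_id!r} is still awaiting human approval")
        self._approved.remove(action_id)
        return self._held.pop(action_id)
```

In this sketch the agent calls `route()` first, executes `AUTO` actions directly, and passes the rest to `hold()`. Only after the human side calls `approve()` does `release()` hand the action back for execution.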

Give Your AI Agents Persistent Storage

Create shared workspaces where agents and humans collaborate. Free 50GB storage for your agents.

How to Build HITL Workflows with Fast.io

Fast.io is well suited to HITL workflows because it treats AI agents and humans as first-class participants with access to the same storage. Here is how to architect a file-based handoff:

Using Shared Folders as State Machines

You can use directory structures to represent workflow states.

- Input: The agent reads from a raw_data/ folder.

- Processing: The agent processes the data and writes the result to a needs_review/ folder.

- Notification: The agent uses a webhook or notification tool to alert the human team.

- Review: A human reviews the file. If it looks good, they move it to approved/. If not, they move it to rejected/ with comments.

- Completion: The agent watches the approved/ folder and executes the final delivery step (e.g., uploading to a client portal).
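The folder-based state machine above fits in a few small functions. This minimal sketch assumes the workspace is reachable as an ordinary directory (for example, a locally synced copy). It does not call any Fast.io API. The extra delivered/ folder, which keeps approved files from being delivered twice, is a hypothetical addition.

```python
from pathlib import Path
from typing import Callable

# One folder per workflow state; "delivered" is an assumed extra state.
STATES = ("raw_data", "needs_review", "approved", "rejected", "delivered")


def init_workspace(root: Path) -> None:
    for state in STATES:
        (root / state).mkdir(parents=True, exist_ok=True)


def submit_for_review(root: Path, name: str, content: str) -> Path:
    """Agent side: write a result into needs_review/ without exposing a half-written file."""
    target = root / "needs_review" / name
    tmp = target.with_name(target.name + ".tmp")
    tmp.write_text(content, encoding="utf-8")
    tmp.replace(target)  # os.replace semantics: atomic on the same POSIX filesystem
    return target


def record_decision(root: Path, name: str, approved: bool, comment: str = "") -> Path:
    """Human side: move the file to approved/ or rejected/ (with comments when rejected)."""
    dest_dir = root / ("approved" if approved else "rejected")
    dest = dest_dir / name
    (root / "needs_review" / name).replace(dest)
    if not approved and comment:
        (dest_dir / f"{name}.comments.txt").write_text(comment, encoding="utf-8")
    return dest


def deliver_approved(root: Path, deliver: Callable[[Path], None]) -> list[str]:
    """Agent side: run the final delivery step for each approved file, then archive it."""
    delivered = []
    for path in sorted((root / "approved").iterdir()):
        if not path.is_file():
            continue
        deliver(path)  # e.g. upload to a client portal (not shown)
        path.replace(root / "delivered" / path.name)
        delivered.append(path.name)
    return delivered
```

In practice the agent would call `deliver_approved()` on a schedule or from a file-change event. Moving each file out of approved/ after delivery makes repeated calls safe.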

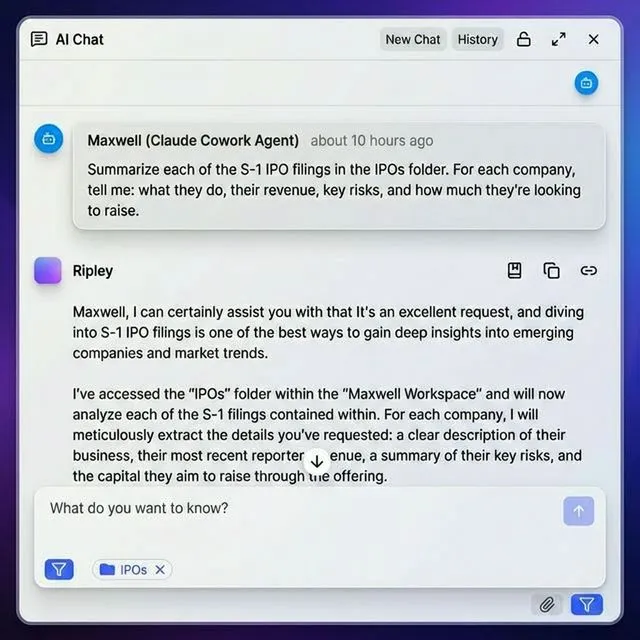

The "Reviewer" Pattern with MCP

Using the Fast.io Model Context Protocol (MCP) server, your agent can actively check for human feedback. The agent can list files in the feedback/ directory to see if a human has provided natural language notes on a previous draft, then read those notes to refine its next iteration.
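On the agent side, this loop comes down to two steps: find the notes on a draft, then fold them into the next prompt. The sketch below uses local file reads as a stand-in for the MCP server's file-listing and file-reading tools, whose exact names are not covered here. It also assumes a hypothetical convention where notes on report.docx live in feedback/report/.

```python
from pathlib import Path
from typing import Optional


def collect_feedback(feedback_root: Path, draft_name: str) -> list[str]:
    """Return human notes for a draft in file-name order; empty if none exist yet."""
    notes_dir = feedback_root / Path(draft_name).stem  # assumed layout: feedback/<draft stem>/
    if not notes_dir.is_dir():
        return []
    return [
        p.read_text(encoding="utf-8").strip()
        for p in sorted(notes_dir.iterdir())
        if p.is_file() and p.suffix in {".md", ".txt"}
    ]


def build_revision_prompt(draft: str, notes: list[str]) -> Optional[str]:
    """Combine the previous draft and reviewer notes, or return None if there is nothing to act on."""
    if not notes:
        return None
    bullet_notes = "\n".join(f"- {note}" for note in notes)
    return (
        "Revise the draft below. Address every reviewer note.\n\n"
        f"Reviewer notes:\n{bullet_notes}\n\n"
        f"Previous draft:\n{draft}"
    )
```

Returning `None` when there are no notes lets the agent keep waiting or move on, rather than revising a draft nobody has commented on.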

Permissions and Safety

Fast.io allows you to grant different permission levels. You might give your agent write access to drafts/ but only read access to published/. This ensures the agent cannot overwrite final production assets, enforcing the human loop at the infrastructure level.
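The storage permissions are what actually stop the agent. Mirroring the same policy in the agent's own code lets it fail fast with a clear error instead of attempting a write the server will reject. This is a minimal sketch assuming a hypothetical per-folder policy map; it does not read Fast.io's permission settings.

```python
import posixpath

# Hypothetical mirror of the workspace permissions granted to the agent.
AGENT_POLICY = {"drafts": "write", "published": "read"}


def can_write(path: str, policy: dict[str, str] = AGENT_POLICY) -> bool:
    """Default-deny check on the top-level folder of a workspace-relative path."""
    normalized = posixpath.normpath(path.lstrip("/"))  # collapses "drafts/../published"
    if normalized == "." or normalized.startswith(".."):
        return False  # empty path, or an attempt to escape the workspace root
    top_level = normalized.split("/", 1)[0]
    return policy.get(top_level) == "write"
```

The agent would call `can_write()` before every upload and stop with an error when it returns `False`. Folders missing from the map are denied by default.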

Best Practices for Smooth Handoffs

To make human-agent collaboration effective, reduce the cognitive load on the human reviewer.

- Preserve Context: When an agent hands off a task, it should include a summary file (e.g., _context.md) explaining what it did, why it made those choices, and what needs review.

- Clear Signaling: Use file naming conventions or metadata to indicate status. A file named draft_waiting_approval.docx is self-explanatory.

- Timeouts: Design your agents to handle human latency. If a human doesn't approve a request within a set time window, should the agent send a reminder, fail safely, or proceed with a default action?

- Feedback Loops: The data from human corrections shouldn't be lost. Store the "before" (agent draft) and "after" (human correction) versions to create a dataset for fine-tuning the agent in the future.
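Two of these practices translate directly into code: writing a _context.md summary alongside each handoff, and deciding what to do while a request waits for a human. The sketch below assumes the agent re-checks pending requests periodically. The summary layout, the reminder and give-up windows, and the `PendingAction` names are illustrative choices, not a prescribed format.

```python
from datetime import datetime, timedelta
from enum import Enum
from pathlib import Path


def write_context_file(folder: Path, did: str, why: str, review_items: list[str]) -> Path:
    """Drop a short handoff summary next to the files the human will review."""
    checklist = "\n".join(f"- [ ] {item}" for item in review_items)
    text = (
        "# Handoff summary\n\n"
        f"## What I did\n{did}\n\n"
        f"## Why\n{why}\n\n"
        f"## Please review\n{checklist}\n"
    )
    path = folder / "_context.md"
    path.write_text(text, encoding="utf-8")
    return path


class PendingAction(Enum):
    WAIT = "wait"
    REMIND = "remind"
    PROCEED_WITH_DEFAULT = "proceed_with_default"
    FAIL_SAFE = "fail_safe"


def on_pending(
    submitted_at: datetime,
    now: datetime,
    already_reminded: bool,
    remind_after: timedelta = timedelta(hours=4),   # hypothetical window
    give_up_after: timedelta = timedelta(hours=24),  # hypothetical window
    has_safe_default: bool = False,
) -> PendingAction:
    """Decide what the agent does while an approval request is still open."""
    waited = now - submitted_at
    if waited >= give_up_after:
        # Proceed automatically only when a default action is known to be safe.
        return PendingAction.PROCEED_WITH_DEFAULT if has_safe_default else PendingAction.FAIL_SAFE
    if waited >= remind_after and not already_reminded:
        return PendingAction.REMIND
    return PendingAction.WAIT
```

Passing `already_reminded` keeps the agent from sending a reminder on every check. Defaulting `has_safe_default` to `False` means a stalled request fails safely unless someone has explicitly declared a default action acceptable.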

Frequently Asked Questions

What is the difference between human-in-the-loop and human-on-the-loop?

Human-in-the-loop (HITL) requires active human intervention for the process to proceed or complete, often acting as a mandatory approval gate. Human-on-the-loop involves humans monitoring an automated system with the power to intervene if necessary, but the system can otherwise run autonomously without constant approval.

Does HITL slow down AI agents?

Yes, by definition, HITL introduces human latency into an automated process. However, this trade-off is often necessary for accuracy and safety. To minimize delays, use HITL only for 'low confidence' or 'high risk' actions (the Escalation Trigger pattern) rather than checking every routine task.

How can I automate the notification for human review?

You can use Fast.io's event system or a simple webhook integration. When an agent writes a file to a specific 'review' folder, it can trigger a notification to Slack, Microsoft Teams, or email, alerting the human that a task is waiting for their attention.
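On the webhook route, the notification itself can be a single HTTP POST. This sketch targets a Slack incoming webhook, which accepts a JSON body with a `text` field. The webhook URL is a placeholder, Microsoft Teams and email need different payloads, and Fast.io's own event system is not shown.

```python
import json
import urllib.request


def build_review_request(webhook_url: str, file_path: str, summary: str = "") -> urllib.request.Request:
    """Build (but do not send) a POST telling reviewers that a file is waiting."""
    text = f"New file waiting for review: {file_path}"
    if summary:
        text += f"\n{summary}"
    body = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        webhook_url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 10.0) -> int:
    """Send the request and return the HTTP status code."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status
```

A call such as `send(build_review_request(webhook_url, "needs_review/report.docx"))` would post the message. Keeping the build and send steps separate lets you check the payload without network access.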

What tools do I need for a basic HITL workflow?

You need a shared state environment (like Fast.io storage), an AI model or agent framework, and a notification channel. Fast.io simplifies this by providing the shared file system that both agents (via MCP) and humans (via web/mobile app) can access securely.

Can HITL improve my AI agent over time?

Yes. Every human correction is a labeled data point. By saving the agent's original output alongside the human's corrected version, you build a dataset that can be used for supervised fine-tuning, or as preference pairs (with the human version preferred) for preference-based methods such as Reinforcement Learning from Human Feedback (RLHF).
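Capturing those pairs can be as simple as appending one JSON line per reviewed task. The `prompt`/`chosen`/`rejected` field names below follow a convention common in preference-tuning tooling, with the human version as the preferred answer. Treat the exact schema as an assumption and adapt it to whatever your fine-tuning pipeline expects.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def log_correction(log_path: Path, task_id: str, prompt: str, agent_draft: str, human_final: str) -> dict:
    """Append one before/after pair to a JSON Lines file and return the record."""
    record = {
        "task_id": task_id,
        "prompt": prompt,
        "chosen": human_final,                 # human-approved version (preferred)
        "rejected": agent_draft,               # the agent's original output
        "edited": agent_draft != human_final,  # False means approved as-is
        "logged_at": datetime.now(timezone.utc).isoformat(),
    }
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record
```

Unedited approvals are still logged with `"edited": false`, which is useful for measuring how often the agent gets things right. Filter them out before building preference pairs, because their `chosen` and `rejected` fields are identical.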
