Accelerated File Transfer: Why Traditional Protocols Fail and How to Fix Them

Accelerated file transfer replaces slow TCP-based protocols with optimized alternatives that push bandwidth utilization from 10-20% up to 95%. This guide covers the physics behind TCP slowdowns, the four main acceleration approaches, benchmarks you can expect, and how to pick the right solution for your team.

What Is Accelerated File Transfer?

Accelerated file transfer is a set of technologies that move large files across networks faster than standard protocols allow. The term covers everything from custom UDP-based protocols to cloud-native storage architectures, but the goal is always the same: use more of your available bandwidth.

The problem they solve is straightforward. Traditional file transfers rely on TCP (Transmission Control Protocol), which dates to the 1970s (standardized as RFC 793 in 1981) and was designed for reliability, not speed. TCP works fine on a local network. But when you send a 50 GB video file from New York to Singapore, TCP interprets the natural network delay as congestion and throttles itself. According to JSCAPE's documentation on accelerated transfer protocols, standard TCP uses only 10-20% of available bandwidth on high-latency connections.

That means most of your paid bandwidth goes to waste on cross-ocean transfers.

Accelerated file transfer solves this by replacing TCP (or working around it) with protocols that separate latency from congestion. The sender keeps pushing data at full speed, handles lost packets intelligently, and fills the pipe regardless of distance.

The Physics of Why TCP Slows Down

To understand why acceleration works, you need to know what goes wrong with TCP over long distances. The slowdown is not a bug. It is built into the protocol.

How TCP Acknowledgments Create a Bottleneck

TCP transfers data as a sequence of packets. After sending a batch, the sender waits for the receiver to confirm receipt (called an ACK) before sending the next batch. The amount of unacknowledged data TCP allows in flight at once is called the "window size."

On a local network, ACKs return almost instantly. The window fills and empties so fast that throughput stays high. But add distance and the math changes.

Light travels through fiber at roughly 200,000 km/s. A signal from New York to London creates a round-trip delay of approximately 60-80ms including processing at each end. During that wait, the sender sits idle unless it has enough packets queued in its window.

A single TCP stream can move at most one window of data per round trip, so its maximum throughput is window size / RTT. With the classic 64 KB window (the limit without window scaling) and an 80ms round trip, that works out to about 6.5 Mbps, no matter how fast the link is. Modern operating systems use larger windows, but the principle holds. The further data travels, the longer ACKs take, and the more bandwidth goes unused.
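To make the window size / RTT limit concrete, here is a minimal Python sketch. The function names are illustrative, and the 65,535-byte window and 80ms round trip are assumed example values, not measurements.

```python
def max_tcp_throughput_bps(window_bytes: int, rtt_seconds: float) -> float:
    """Upper bound for one TCP stream: one full window per round trip."""
    return window_bytes * 8 / rtt_seconds


def bandwidth_delay_product_bytes(link_bps: float, rtt_seconds: float) -> float:
    """Bytes that must be in flight to keep a link of this speed full."""
    return link_bps * rtt_seconds / 8


window = 65_535  # largest window without TCP window scaling (assumed example)
rtt = 0.080      # 80 ms round trip (assumed example)

limit = max_tcp_throughput_bps(window, rtt)
needed = bandwidth_delay_product_bytes(1e9, rtt)

print(f"Single-stream ceiling: {limit / 1e6:.2f} Mbps")
print(f"Window needed to fill 1 Gbps: {needed / 1e6:.1f} MB")
```

The second number is the bandwidth-delay product. If the window is smaller than that, the sender runs out of data it is allowed to send and waits for ACKs.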

Congestion Control Makes It Worse

TCP was designed to be a good citizen on shared networks. Its congestion control algorithms (Reno, Cubic, BBR) reduce sending speed whenever they detect signs of trouble. The problem is that TCP cannot tell the difference between actual congestion and high latency from distance.

High latency looks like congestion to TCP. So it backs off. On an intercontinental link, TCP starts slow, ramps up cautiously, and then backs off at the first sign of delay. The result is a transfer that never gets close to the actual capacity of the connection.

Packet Loss: The Multiplier

Real networks lose packets. Routers drop them when queues fill up. Wireless links corrupt them. Even fiber has error rates. TCP responds to packet loss by assuming the network is overwhelmed. It cuts its sending window in half and slowly ramps back up.
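A rough sketch of why that ramp-up hurts more over distance. It assumes classic Reno-style behavior (halve the window on loss, then add one packet per round trip); CUBIC and BBR recover differently, so treat the numbers as an illustration only.

```python
def rtts_to_recover(cwnd_packets: int) -> int:
    """Round trips for additive increase (+1 packet per RTT) to undo one halving."""
    current = cwnd_packets // 2  # window after the loss event
    rtts = 0
    while current < cwnd_packets:
        current += 1             # one extra packet per round trip
        rtts += 1
    return rtts


def recovery_seconds(cwnd_packets: int, rtt_seconds: float) -> float:
    """Wall-clock time to climb back to the pre-loss window."""
    return rtts_to_recover(cwnd_packets) * rtt_seconds


# Same window, same single loss event, different distances (assumed values).
for label, rtt in [("LAN, 1 ms", 0.001), ("Intercontinental, 100 ms", 0.100)]:
    print(f"{label}: {recovery_seconds(1000, rtt):.1f} s to recover")
```

The number of round trips is the same in both cases. Only the length of each round trip changes, which is why one dropped packet costs far more on a long link.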

According to research from the Mathis equation on TCP throughput, even 1% packet loss can reduce TCP throughput by 50% or more on high-latency links. High latency combined with packet loss is where TCP breaks down completely.

That is the gap accelerated transfer technologies aim to close.

Four Approaches to Accelerated Transfer

Acceleration is not one technology. There are four distinct approaches, each with different trade-offs. Which one fits depends on your transfer patterns, infrastructure, and budget.

1. UDP-Based Protocols with Custom Reliability

UDP-based acceleration is the most common method. Instead of TCP, these tools build a custom protocol on top of UDP (User Datagram Protocol). UDP does not wait for acknowledgments. It fires packets as fast as the sender can produce them.

Raw UDP is unreliable. Packets get lost and nobody knows. So accelerated transfer solutions add their own reliability layer. They break files into numbered blocks, transmit continuously, track which blocks arrive, and retransmit only the missing ones. The key difference from TCP: retransmission happens selectively without slowing down the rest of the transfer.

How it works in practice:

- The sender breaks the file into fixed-size blocks

- Blocks stream over UDP at near-line rate

- The receiver tracks which blocks arrived intact

- Missing blocks get requested and retransmitted in parallel with new data

- The protocol estimates actual congestion (for example, from queuing delay building up at routers) separately from the fixed latency of distance
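The steps above can be sketched as a small simulation. This is not how any particular product implements its protocol. It models the channel as independent random loss and works in rounds, whereas real protocols interleave retransmissions with new data. The function name and parameters are hypothetical.

```python
import random


def simulate_transfer(num_blocks: int, loss_rate: float, seed: int = 1) -> tuple[int, int]:
    """Simulate numbered blocks over a lossy channel with selective retransmission.

    Returns (rounds, total_blocks_sent).
    """
    if not 0 <= loss_rate < 1:
        raise ValueError("loss_rate must be in [0, 1)")
    rng = random.Random(seed)
    missing = set(range(num_blocks))  # block numbers the receiver still lacks
    rounds = 0
    sent = 0
    while missing:
        rounds += 1
        sent += len(missing)          # only the missing blocks are sent again
        arrived = {b for b in missing if rng.random() >= loss_rate}
        missing -= arrived            # receiver marks these blocks as intact
    return rounds, sent


rounds, sent = simulate_transfer(num_blocks=10_000, loss_rate=0.05)
overhead = sent / 10_000 - 1
print(f"{rounds} rounds, {sent} blocks sent, {overhead:.1%} retransmission overhead")
```

The point of the sketch is the overhead: the total number of blocks sent stays close to the file size plus the loss rate, because a lost block triggers one retransmission rather than a slowdown of the whole stream.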

Products using this approach include Signiant (used heavily in media and entertainment), FileCatalyst by Fortra, and IBM's Aspera with its FASP protocol. These solutions routinely achieve 90-95% bandwidth utilization regardless of distance.

Best for: Regular, high-volume transfers between known locations. Media companies moving dailies. Research institutions sharing datasets.

Drawback: Both ends need compatible software installed. You cannot send to someone who does not have the client.

2. Parallel TCP Streams

Instead of replacing TCP, this approach opens many TCP connections at once. Each individual connection still suffers from the latency bottleneck, but many simultaneous streams can collectively approach full bandwidth utilization.

Think of it like lanes on a highway. One lane (one TCP stream) has a speed limit set by latency. But if you open many lanes, the total traffic flow increases proportionally.

GridFTP, used widely in scientific computing, pioneered this approach. Some cloud sync clients and managed file transfer products also use multi-stream TCP internally.
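The highway analogy maps onto a simple model: each stream is capped at window / RTT, and the total is capped by the link. The sketch below assumes an idealized network with no loss and no contention between streams, so real results will be lower. The 1 MiB window is an assumed example value.

```python
def aggregate_throughput_bps(streams: int, window_bytes: int,
                             rtt_seconds: float, link_bps: float) -> float:
    """Idealized total throughput of N parallel TCP streams on one link."""
    per_stream = window_bytes * 8 / rtt_seconds  # each lane's speed limit
    return min(streams * per_stream, link_bps)   # the road itself is the hard cap


WINDOW = 1_048_576   # 1 MiB window per stream (assumed)
RTT = 0.100          # 100 ms round trip
LINK = 1e9           # 1 Gbps link

for n in (1, 4, 8, 16):
    total = aggregate_throughput_bps(n, WINDOW, RTT, LINK)
    print(f"{n:2d} streams: {total / 1e6:7.1f} Mbps")
```

Once the stream count is high enough to hit the link cap, adding more streams only adds overhead.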

Best for: Environments where deploying custom UDP protocols is not possible. Transfers through firewalls that block non-TCP traffic. Quick performance improvements without protocol changes.

Drawback: Diminishing returns after a certain number of streams. Each connection consumes memory and CPU. Less effective than UDP-based solutions at high latencies.

3. WAN Optimization Appliances

WAN optimization uses hardware or virtual devices that sit between your network and the internet. They intercept traffic and apply acceleration techniques like compression, deduplication, protocol optimization, and caching.

Companies like Riverbed (with its SteelHead appliances) and Citrix (with Citrix SD-WAN) offer these solutions. The appliance at one end compresses and optimizes outgoing traffic. A matching appliance at the other end decompresses and reassembles it.
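A toy version of the compress-and-deduplicate idea, using only the Python standard library. It assumes fixed-size chunks and a shared chunk cache on each side. Commercial appliances use their own chunking and wire formats, so this shows the concept only.

```python
import hashlib
import zlib


def encode(data: bytes, cache: dict[bytes, bytes], chunk_size: int = 4096) -> list[tuple[str, bytes]]:
    """Sending side: send a short reference for chunks seen before, compressed bytes otherwise."""
    messages = []
    for i in range(0, len(data), chunk_size):
        chunk = data[i:i + chunk_size]
        digest = hashlib.sha256(chunk).digest()
        if digest in cache:
            messages.append(("ref", digest))               # 32 bytes instead of the chunk
        else:
            cache[digest] = chunk
            messages.append(("raw", zlib.compress(chunk)))
    return messages


def decode(messages: list[tuple[str, bytes]], cache: dict[bytes, bytes]) -> bytes:
    """Receiving side: rebuild the original bytes from references and compressed chunks."""
    parts = []
    for kind, payload in messages:
        if kind == "ref":
            parts.append(cache[payload])
        else:
            chunk = zlib.decompress(payload)
            cache[hashlib.sha256(chunk).digest()] = chunk
            parts.append(chunk)
    return b"".join(parts)


data = (b"A" * 4096 + b"B" * 4096) * 10   # highly repetitive sample payload
sender_cache, receiver_cache = {}, {}
messages = encode(data, sender_cache)
wire_bytes = sum(len(payload) for _, payload in messages)
restored = decode(messages, receiver_cache)
print(f"{len(data)} bytes of data sent as {wire_bytes} bytes on the wire")
```

Both caches must stay in sync, which is why a matching appliance is needed at each end.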

Best for: Enterprises with dedicated links between offices that want to accelerate all traffic, not just file transfers. Organizations already invested in WAN infrastructure.

Drawback: Expensive. Requires hardware at both ends. Does not help with transfers to external parties who do not have matching equipment.

4. Cloud-Native Architecture

Cloud-native is the newest approach and works differently from the others. Instead of accelerating the transfer between two endpoints, these platforms eliminate point-to-point transfers entirely.

Files upload once to cloud infrastructure optimized for ingestion (chunked uploads, automatic retry, parallel streams). Once stored, files are accessed via streaming or download links from geographically distributed servers. A recipient in Tokyo downloads from a nearby server, not from your office in Los Angeles.

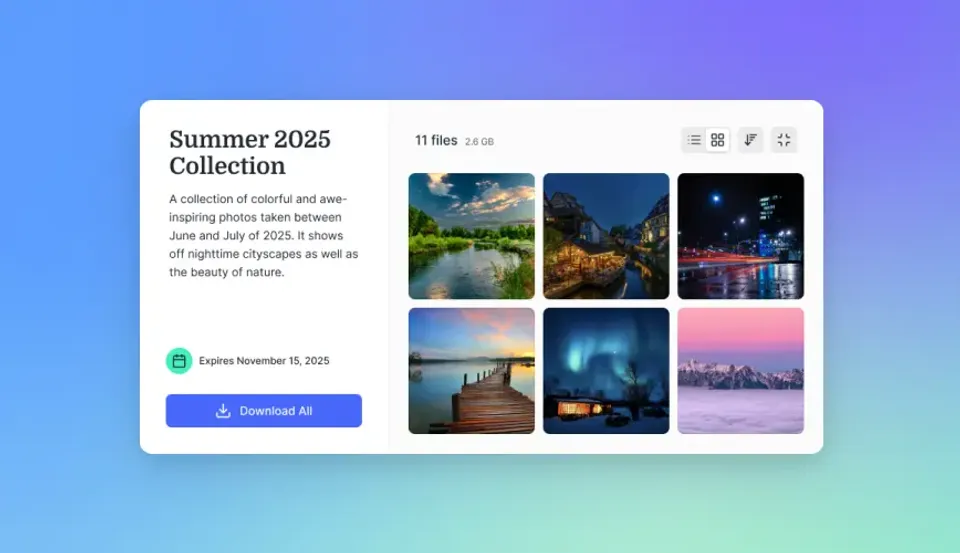

Fast.io uses this approach. Files upload with chunked transfers that resume automatically if interrupted. Once stored, content streams on-demand via HLS (HTTP Live Streaming) for video. Recipients access files through links in their browser without installing any software.
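The chunk-and-resume pattern can be sketched in a few lines. This is not Fast.io's API. `FlakyStore` is a hypothetical in-memory stand-in for an upload endpoint, and the chunk size and retry count are arbitrary.

```python
class FlakyStore:
    """Hypothetical upload endpoint that drops the connection on chosen calls."""

    def __init__(self, fail_on_calls):
        self.chunks = {}                      # chunk index -> bytes already stored
        self.calls = 0
        self.fail_on_calls = set(fail_on_calls)

    def put_chunk(self, index: int, data: bytes) -> None:
        self.calls += 1
        if self.calls in self.fail_on_calls:
            raise ConnectionError("simulated network drop")
        self.chunks[index] = data


def upload(data: bytes, store: FlakyStore, chunk_size: int = 4, max_attempts: int = 3) -> bytes:
    """Upload in chunks, retrying failures and skipping chunks the store already has."""
    total = (len(data) + chunk_size - 1) // chunk_size
    for index in range(total):
        if index in store.chunks:             # resume: this chunk survived an earlier run
            continue
        chunk = data[index * chunk_size:(index + 1) * chunk_size]
        for attempt in range(1, max_attempts + 1):
            try:
                store.put_chunk(index, chunk)
                break
            except ConnectionError:
                if attempt == max_attempts:   # give up; a later run can resume from here
                    raise
    return b"".join(store.chunks[i] for i in range(total))


store = FlakyStore(fail_on_calls={2, 3})      # second and third requests fail
result = upload(b"abcdefghij", store)
print(result, "after", store.calls, "requests")
```

A failed chunk costs one small retry, not a restart of the whole file.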

Best for: Teams sharing files with external clients and partners. Distributed teams that need ongoing access to shared files. Workflows where the same file gets accessed by many people.

Drawback: Requires uploading to a third party's cloud. Initial upload speed depends on your connection to the cloud provider. Not as fast as dedicated UDP solutions for massive one-time transfers between two fixed points.

Head-to-Head Comparison of Acceleration Methods

Choosing the right approach depends on your specific transfer patterns. The four methods compare like this.

UDP-based protocols (Signiant, Aspera, FileCatalyst)

- Speed: 90-95% of available bandwidth regardless of distance

- Setup: Software installation required on both ends

- Cost: Per-seat or volume-based licensing

- External sharing: Requires recipients to install client software

- Best metric: Raw transfer speed over long distances

Parallel TCP streams (GridFTP, multi-threaded clients)

- Speed: Moderate bandwidth utilization, depending on stream count

- Setup: Moderate. Some tools require configuration

- Cost: Often included in existing tools or open-source

- External sharing: Works with standard infrastructure

- Best metric: Improvement without protocol changes

WAN optimization appliances (Riverbed, Citrix SD-WAN)

- Speed: Several-times improvement on all WAN traffic

- Setup: Hardware deployment at each location

- Cost: Significant capital expenditure for enterprise deployments

- External sharing: Only between locations with appliances

- Best metric: Total WAN traffic improvement

Cloud-native (Fast.io, modern cloud storage)

- Speed: Upload limited by your connection; downloads from edge servers are fast globally

- Setup: No installation. Browser-based

- Cost: Usage-based, starting free

- External sharing: Recipients access via browser link, no software needed

- Best metric: Time-to-access for distributed teams

For teams that regularly ship large files between fixed locations (a studio sending dailies to a post house), UDP-based solutions provide the best raw performance. For teams sharing files with clients, partners, and distributed collaborators, cloud-native platforms reduce friction and work for everyone without software installs.

Share Files Without Limits on Fast.io

Fast.io's cloud-native platform streams video 50-60% faster with HLS and handles chunked uploads with automatic resume. No client software required. Free plan with 10,000 monthly credits.

When Do You Actually Need Acceleration?

Not every file transfer problem requires acceleration technology. Use these criteria to decide.

You Probably Need Acceleration If:

- File sizes are regularly in the tens of gigabytes or larger. Small files transfer quickly even with TCP's limitations. The bottleneck only becomes painful with large payloads.

- Your team spans continents. High latency on intercontinental links cripples TCP throughput. Local transfers within a city do not have this problem.

- You are paying for bandwidth you cannot use. If your transfers top out at a fraction of your connection speed, you are wasting what you pay for.

- Time-sensitive workflows depend on file delivery. Video production deadlines, research collaboration, and manufacturing iterations all suffer when files arrive hours late.

- Your network is unreliable. Satellite links, mobile connections, and congested internet paths with high packet loss make TCP nearly unusable for large files.

Standard Transfer Is Probably Fine If:

- Files are small. Even inefficient TCP finishes a modest transfer in seconds on a decent connection.

- Everyone is on the same local network. Low latency means TCP performs at near-line rate.

- Transfers are occasional. Setting up acceleration infrastructure is not worth the effort for a monthly file exchange.

- Your connection is relatively slow. On slower links, TCP can usually saturate the available bandwidth even with moderate latency.

The Middle Ground: Cloud Storage

Many teams fall between these extremes. They send moderately large files, work with people in other cities (not other continents), and need something faster than email attachments but do not want to deploy Aspera.

Cloud-native storage platforms fill this gap. You upload once, share a link, and recipients download from the nearest server. There is no point-to-point transfer to accelerate because the file is already in the cloud. For video files specifically, HLS streaming means recipients can start watching immediately without waiting for the full download.

Setting Up Your First Accelerated Workflow

If you have decided acceleration makes sense, the setup process varies by approach.

Cloud-Native Approach (Fastest to Deploy)

- Sign up for a cloud storage platform. Fast.io offers a free plan with 10,000 monthly credits and no credit card required.

- Create a workspace for your team or project. Invite collaborators so everyone has access.

- Upload files using chunked uploads. The platform handles retry and resume automatically.

- Share via link. Recipients open a browser link, preview files inline (including video streaming), and download what they need.

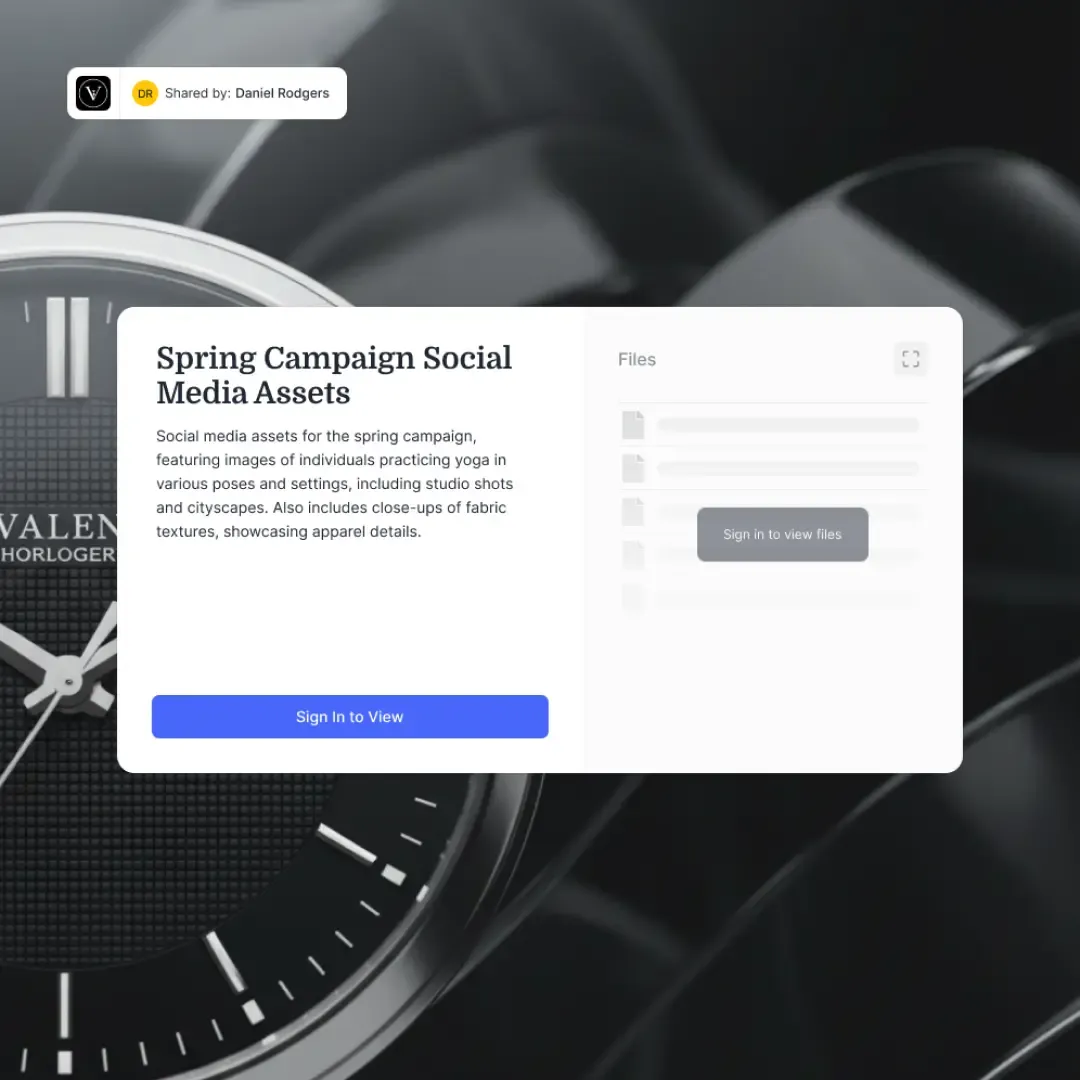

- Set permissions. Control who can view, download, or upload. Add password protection and expiration dates for sensitive files.

This approach works in under ten minutes. Upload speed depends on your connection to the cloud provider, but downloads are fast globally because content is served from distributed infrastructure.

UDP-Based Protocol Setup

- Choose a product. Signiant, FileCatalyst, and Aspera are the major options. Each offers trial licenses.

- Install server software at the sending location, typically on a Linux or Windows server.

- Install client software on recipient machines. Some products offer web-based portals as an alternative.

- Configure network rules. UDP-based protocols need specific ports opened in firewalls at both ends.

- Test with representative files. Run transfers with actual file sizes over your actual network path. Compare speeds to your baseline TCP transfers.

- Automate. Use the product's API or scheduling tools to trigger transfers based on file events or schedules.

Enterprise rollouts with UDP-based solutions can take days to weeks depending on infrastructure complexity.

Parallel TCP Configuration

- Install a multi-stream client. Tools like lftp, aria2, or GridFTP support parallel connections.

- Configure stream count. Use multiple parallel streams to reduce the impact of latency.

- Test and adjust. Monitor CPU, memory, and network utilization during transfers. Find the stream count that maximizes throughput without overloading your systems.
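Multi-stream clients handle the splitting for you, but it helps to see what a parallel download amounts to: the file is cut into byte ranges and each stream fetches one. A minimal sketch of that split follows. The function name is illustrative, and the output uses the standard HTTP Range header format.

```python
def split_ranges(size: int, streams: int) -> list[tuple[int, int]]:
    """Split a file of `size` bytes into contiguous inclusive byte ranges, one per stream."""
    if size <= 0 or streams <= 0:
        raise ValueError("size and streams must be positive")
    streams = min(streams, size)          # never create empty ranges
    base, extra = divmod(size, streams)
    ranges = []
    start = 0
    for i in range(streams):
        length = base + (1 if i < extra else 0)   # spread the remainder over the first ranges
        ranges.append((start, start + length - 1))
        start += length
    return ranges


for first, last in split_ranges(size=10, streams=3):
    print(f"Range: bytes={first}-{last}")
```

When you test stream counts, change one variable at a time and record throughput alongside CPU and memory use.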

Real-World Performance Benchmarks

Marketing claims in this space are aggressive. "100x faster" appears on every product page. The actual numbers tell a more grounded story.

TCP Baseline Performance

The Mathis equation gives a theoretical maximum for TCP throughput:

Throughput = (MSS / RTT) x (1 / sqrt(loss))

Where MSS is the maximum segment size (typically 1,460 bytes), RTT is round-trip time, and loss is the packet loss rate. On a connection with 100ms RTT and 0.1% packet loss, the formula gives (1,460 bytes x 8 bits / 0.1 s) x (1 / sqrt(0.001)), which is about 3.7 Mbps, regardless of your actual bandwidth. The full Mathis model also includes a constant factor of roughly 1.2, which does not change the picture.

In practice, modern TCP implementations with window scaling do better than this formula suggests. But the trend holds: throughput drops dramatically as latency and loss increase.
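A short calculator for the formula as written above, without the constant factor from the full Mathis model. The loss rates are assumed example values chosen to show how quickly the ceiling falls.

```python
from math import sqrt


def mathis_throughput_bps(mss_bytes: int, rtt_seconds: float, loss_rate: float) -> float:
    """Throughput = (MSS / RTT) x (1 / sqrt(loss)), in bits per second."""
    return (mss_bytes * 8 / rtt_seconds) * (1 / sqrt(loss_rate))


MSS = 1460   # bytes
RTT = 0.100  # 100 ms

for loss in (0.00001, 0.0001, 0.001, 0.01):
    mbps = mathis_throughput_bps(MSS, RTT, loss) / 1e6
    print(f"loss {loss:.3%}: about {mbps:.1f} Mbps")
```

Each 100x increase in loss cuts the ceiling by 10x, and doubling the RTT halves it.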

Accelerated Transfer Performance

According to Signiant's published benchmarks, their acceleration technology achieves 90-95% of available bandwidth on connections with up to 200ms latency and 5% packet loss. FileCatalyst publishes similar numbers.

On a 1 Gbps connection with 100ms latency:

- Standard TCP: 100-300 Mbps (10-30% utilization)

- Multi-stream TCP (16 streams): 500-700 Mbps (50-70% utilization)

- UDP-based acceleration: 900-950 Mbps (90-95% utilization)

The "100x faster" claim comes from comparing worst-case TCP (high latency, significant packet loss, small windows) against optimized acceleration. According to published accelerated transfer benchmarks, expect a 5-20x improvement under real-world conditions. That still matters a lot when your 4-hour transfer finishes in 15 minutes.
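To translate utilization into waiting time, a back-of-the-envelope estimate helps. The sketch assumes a 50 GB file on a 1 Gbps link and uses sample utilization figures. It ignores protocol overhead and disk speed.

```python
def transfer_minutes(size_gb: float, link_mbps: float, utilization: float) -> float:
    """Minutes to move size_gb (decimal gigabytes) at a given share of link capacity."""
    bits = size_gb * 1e9 * 8
    effective_bps = link_mbps * 1e6 * utilization
    return bits / effective_bps / 60


scenarios = [
    ("Standard TCP at 20%", 0.20),
    ("Multi-stream TCP at 60%", 0.60),
    ("UDP-based at 92.5%", 0.925),
]
for label, utilization in scenarios:
    print(f"{label}: {transfer_minutes(50, 1000, utilization):.1f} minutes")
```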

Cloud-Native Performance

Cloud platforms measure differently because the transfer model is different. Upload speed depends on your connection to the cloud provider's nearest ingress point. Download speed depends on the recipient's proximity to a CDN edge server.

For Fast.io specifically, HLS video streaming delivers content 50-60% faster than progressive download because playback starts immediately and the stream adapts to the viewer's connection speed. File downloads benefit from multi-region storage and CDN distribution.

The practical advantage of cloud-native is not raw transfer speed between two points. It is eliminating redundant transfers entirely. Upload once, share with 50 people, and each person downloads from a nearby server.

Security Considerations for Fast Transfers

Speed is meaningless if your data is compromised in transit. Every acceleration approach has different security characteristics.

Encryption

All reputable accelerated transfer products encrypt data in transit. UDP-based solutions typically use AES-256 encryption applied at the application layer, since UDP itself has no encryption. Cloud platforms use TLS for data in transit and encrypt data at rest on their servers.

Fast.io encrypts data both at rest and in transit, with granular permissions at the organization, workspace, folder, and file levels. Audit logs track all activity, including views, downloads, and permission changes.

Authentication and Access Control

Cloud-native platforms typically offer the most flexible access controls. You can share files via password-protected links, restrict access to specific email domains, set expiration dates, and revoke access instantly. Enterprise features like SSO/SAML integration (supporting providers like Okta, Azure AD, and Google) add another layer of security.

UDP-based tools rely on user accounts and API keys. Access control is coarser, typically at the folder or transfer job level rather than per-file.

The Firewall Problem

UDP-based protocols need specific ports opened in firewalls. This creates friction with IT security teams who prefer to lock down non-standard traffic. Some products solve this by falling back to TCP through standard HTTPS ports (443), but at the cost of reduced acceleration.

Cloud-native platforms sidestep this problem. All traffic flows over standard HTTPS. No firewall changes needed. No IT tickets. This matters when you are sharing files with external partners whose networks you do not control.

Industry-Specific Use Cases

Acceleration needs vary by industry. These are the patterns that work in practice.

Media and Entertainment

Video production generates massive files. A single day of 4K RAW footage can exceed 1 TB. Studios, post-production houses, and broadcast networks move these files constantly between locations.

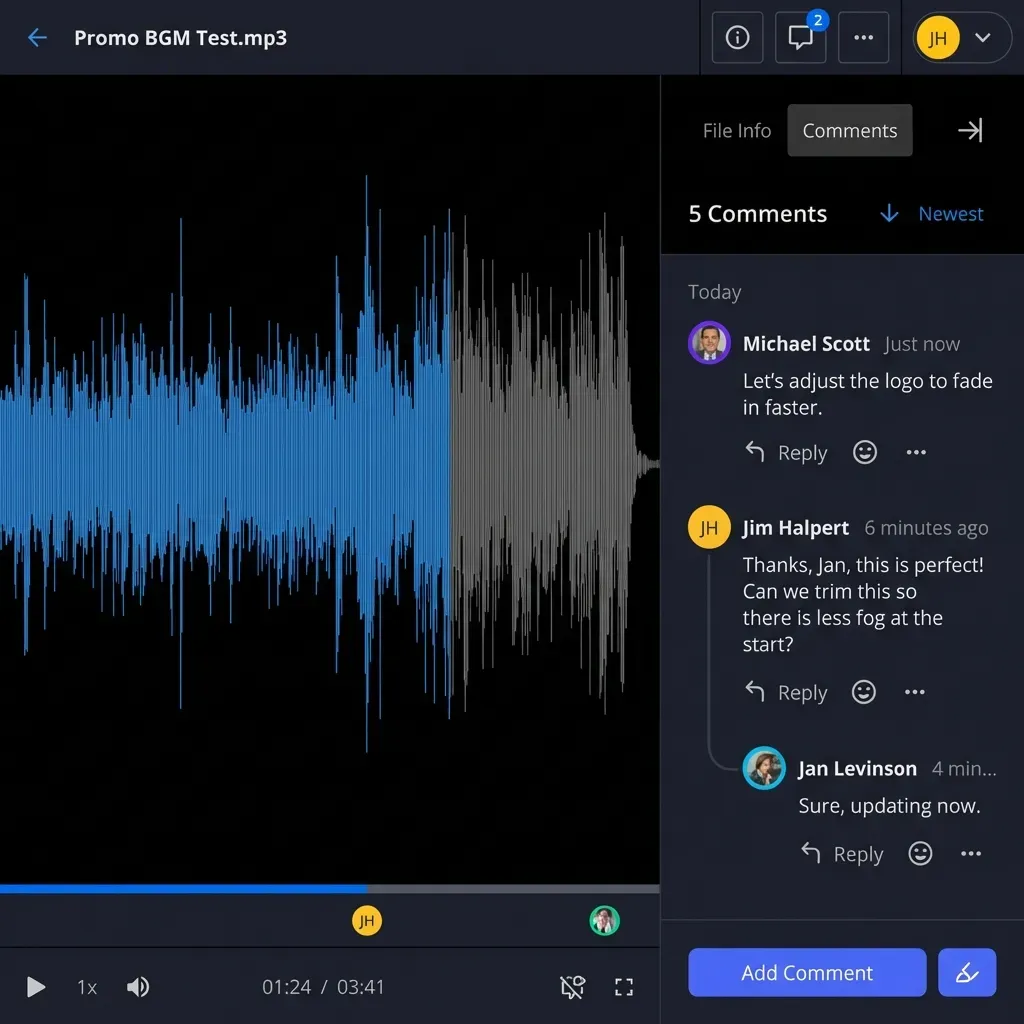

UDP-based solutions dominate here. Signiant is the de facto standard for studio-to-studio transfers. But the workflow is shifting. More teams use cloud-native platforms for review and approval workflows where stakeholders need to watch footage without waiting for full downloads. HLS streaming lets directors review dailies from anywhere, with playback starting in seconds rather than hours.

Scientific Research

Genomic datasets, climate models, and particle physics data regularly hit 10-100 TB. The scientific community was among the first to adopt accelerated transfer, with GridFTP becoming standard at research institutions.

The challenge is not just speed but also verification. When you transfer 50 TB of genomic sequences, you need to confirm every byte arrived intact. Accelerated solutions provide checksums at the block level, catching corruption that file-level checks might miss.
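Block-level verification is easy to illustrate with the standard library. The sketch below assumes SHA-256 and a fixed block size. Real products choose their own hash functions and block sizes.

```python
import hashlib


def block_digests(data: bytes, block_size: int) -> list[str]:
    """One SHA-256 digest per fixed-size block."""
    return [
        hashlib.sha256(data[i:i + block_size]).hexdigest()
        for i in range(0, len(data), block_size)
    ]


def corrupted_blocks(sent: list[str], received: list[str]) -> list[int]:
    """Indexes of blocks whose digests differ between sender and receiver."""
    return [i for i, (a, b) in enumerate(zip(sent, received)) if a != b]


original = bytes(range(256)) * 4   # 1,024 bytes of sample data
damaged = bytearray(original)
damaged[300] ^= 0xFF               # flip the bits of one byte in transit

bad = corrupted_blocks(block_digests(original, 256),
                       block_digests(bytes(damaged), 256))
print("Blocks to retransmit:", bad)
```

A file-level checksum would only tell you the transfer failed. Block-level digests tell you which block to resend.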

Manufacturing and Engineering

CAD files, simulation outputs, and quality documentation flow between headquarters, factories, and suppliers across time zones. The data is sensitive (trade secrets, proprietary designs) and time-critical (production schedules depend on current files).

Cloud-native platforms with secure sharing and branded portals work well here. Upload designs to a workspace, share a link with your supplier in Shenzhen, and they download from a nearby server. No VPN tunnels, no FTP servers, no overnight waits.

Legal and Financial

Deal rooms, due diligence documents, and case files are large but not enormous (typically 1-50 GB). Speed matters less than security and access control. Virtual data rooms with audit trails, watermarking, and granular permissions are more important than raw transfer speed for these workflows.

Common Mistakes When Implementing Acceleration

Teams waste money and time on acceleration projects because of a few recurring mistakes.

Optimizing the wrong bottleneck. Before buying acceleration software, run a speed test between your endpoints. If your connection is 100 Mbps and TCP is achieving 90 Mbps, acceleration will not help. Your bottleneck is bandwidth, not protocol overhead. Acceleration helps when you have unused bandwidth that TCP cannot fill.

Ignoring the last mile. Your fast office connection means nothing if your recipient is on a slow home link. Accelerated protocols cannot create bandwidth that does not exist. Cloud-native solutions partially solve this by letting recipients stream content at adaptive bitrates rather than downloading full files.

Forgetting about external recipients. Internal acceleration is straightforward. But when you need to share with a client who uses a different platform or a partner behind a restrictive firewall, the client-software requirement becomes a barrier. Cloud-native sharing via browser links removes this friction.

Overbuilding for occasional use. Deploying Aspera or Signiant for a team that sends large files twice a month is overkill. Match the solution to your actual transfer frequency. For occasional large transfers, cloud-native platforms or free file transfer services may be sufficient.

Neglecting monitoring. Without measuring transfer performance, you cannot tell if acceleration is working. Track transfer completion times, bandwidth utilization, and failure rates. Compare against your TCP baseline to quantify the improvement.
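A minimal sketch of that comparison, assuming you log size, duration, and outcome for each transfer. The `Transfer` record and the sample numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Transfer:
    size_bytes: int
    seconds: float
    succeeded: bool


def summarize(transfers: list[Transfer], link_bps: float) -> dict[str, float]:
    """Average throughput, link utilization, and failure rate for a set of transfers."""
    done = [t for t in transfers if t.succeeded]
    total_bits = sum(t.size_bytes for t in done) * 8
    total_seconds = sum(t.seconds for t in done)
    throughput = total_bits / total_seconds if total_seconds else 0.0
    failure_rate = (1 - len(done) / len(transfers)) if transfers else 0.0
    return {
        "throughput_bps": throughput,
        "utilization": throughput / link_bps,
        "failure_rate": failure_rate,
    }


LINK = 1e9  # 1 Gbps
baseline = summarize([Transfer(10**9, 80.0, True)], LINK)
accelerated = summarize([Transfer(10**9, 10.0, True), Transfer(10**9, 5.0, False)], LINK)

speedup = accelerated["throughput_bps"] / baseline["throughput_bps"]
print(f"Baseline utilization: {baseline['utilization']:.0%}")
print(f"Accelerated utilization: {accelerated['utilization']:.0%}")
print(f"Speedup: {speedup:.1f}x, failure rate: {accelerated['failure_rate']:.0%}")
```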

The Future of File Transfer Acceleration

Several trends are changing how fast file transfer works.

QUIC and HTTP/3. QUIC, originally developed at Google and standardized by the IETF as RFC 9000, is the foundation of HTTP/3. It builds reliability on top of UDP, similar to what accelerated transfer products do. As QUIC adoption grows, standard web transfers will get faster without special software. QUIC handles latency and packet loss better than TCP, though it is not optimized specifically for bulk file transfer.

Edge computing and CDN expansion. Cloud providers continue building edge locations closer to end users. This reduces the effective distance data needs to travel, shrinking the latency that makes TCP slow. For cloud-native platforms, more edge locations mean faster downloads for more users.

AI-driven workflow integration. According to a 2026 report from IntelligentHQ, managed file transfer is becoming tightly integrated with AI-driven workflow automation. Files do not just transfer; they trigger processing pipelines, get indexed for search, and feed into AI analysis automatically. Fast.io's Intelligence Mode already does this. Upload a file and it is auto-indexed, searchable by meaning, and queryable through AI chat with citations.

Transfer and storage are merging. The line between "transferring a file" and "storing a file" is disappearing. Cloud-native platforms treat upload as ingestion into a persistent workspace rather than a point-to-point transfer. Once ingested, the file is accessible globally without additional transfers. This makes "accelerated transfer" less about raw protocol speed and more about reducing the number of transfers needed in the first place.

Frequently Asked Questions

What is accelerated file transfer?

Accelerated file transfer is technology that moves large files faster than traditional TCP-based protocols allow. It uses optimized methods like UDP-based protocols with custom reliability layers, parallel connections, or cloud-native architectures to overcome the speed limitations caused by network latency. These solutions can achieve 5-100x faster speeds than standard FTP or HTTP transfers, with the biggest gains on long-distance, high-bandwidth connections.

How can I speed up large file transfers?

Start by identifying your bottleneck. If your connection is fast but transfers are slow, the problem is likely TCP overhead on a high-latency link. Solutions include using UDP-based transfer software (Signiant, Aspera, FileCatalyst), switching to cloud-native storage that streams files on-demand, opening parallel TCP connections, or compressing files before transfer. For regular large transfers between fixed locations, dedicated acceleration software provides the biggest speed gains. For sharing with external collaborators, cloud-native platforms eliminate the transfer problem entirely.

What is the fastest way to transfer large files?

For raw speed between two fixed points, UDP-based acceleration software (Signiant, Aspera, FileCatalyst) is fastest, achieving 90-95% of available bandwidth regardless of distance. For practical speed when sharing with multiple people, cloud-native platforms are faster overall because you upload once and recipients download from nearby servers. For large datasets (multi-terabyte), physically shipping hard drives still beats network transfer in some cases.

Why does file transfer slow down over long distances?

TCP, the protocol behind most file transfers, requires the receiver to acknowledge each batch of data before the sender continues. Over long distances, these acknowledgments take longer to return (70ms New York to London, 120ms LA to Tokyo). TCP interprets the delay as network congestion and reduces speed further. The result is that a 10 Gbps connection might deliver only 1-2 Gbps for intercontinental transfers, wasting 80-90% of available bandwidth.

Is UDP faster than TCP for file transfers?

Raw UDP is faster because it does not wait for acknowledgments. But UDP alone is unreliable for file transfers since lost packets go undetected. Accelerated transfer solutions use UDP as the transport layer and add custom reliability on top, tracking blocks, retransmitting only what is lost, and verifying integrity. The result combines UDP's speed advantage with the reliability required for file transfers.

Do I need special software for accelerated file transfer?

It depends on the approach. Traditional UDP-based solutions (Signiant, Aspera) require software installation on both sending and receiving ends. Cloud-native platforms like Fast.io work through standard browsers, with acceleration built into the cloud infrastructure. For sharing with external clients or partners, browser-based solutions avoid the friction of requiring recipients to install anything.

How much faster is accelerated file transfer than FTP?

On high-latency connections (100ms+ round-trip time), accelerated transfer is typically 5-20x faster than FTP in real-world conditions. The improvement grows with distance and bandwidth. On a 1 Gbps link with 100ms latency, FTP might achieve 100-300 Mbps while UDP-based acceleration reaches 900-950 Mbps. The commonly cited '100x faster' figure represents extreme scenarios with high latency and significant packet loss.

What is FASP protocol?

FASP (Fast, Adaptive, Secure Protocol) is IBM's proprietary accelerated transfer protocol, developed originally by Aspera. It uses UDP for data transport with a custom reliability and congestion control layer. FASP monitors actual network conditions and adjusts transmission rates without the conservative backoff behavior of TCP. It encrypts data in transit using AES-128 and is widely used in media, entertainment, and scientific research for moving large files at near-line rate.
