How to Design AI Agent Architecture Patterns for Production Systems

AI agent architecture patterns are reusable design structures that define how autonomous agents perceive, reason, and act within their environment. Choosing the right pattern determines your system's cost, reliability, and ability to scale before you write a single line of code. This guide covers the four essential patterns with practical implementation guidance for storage, state management, and production deployment.

What Are AI Agent Architecture Patterns?

AI agent architecture patterns are reusable design structures for how an autonomous agent perceives, reasons, and acts within its environment. Think of them as blueprints that separate effective production systems from expensive experiments.

Your architecture choice determines three things that compound quickly in production: cost, reliability, and scaling flexibility. A ReAct agent handling customer support typically makes several LLM calls per interaction, one for each reason-act-observe cycle. A planning pattern for the same task often needs fewer calls because it creates the complete plan upfront. But planning adds complexity, and the wrong choice means months of rebuilding instead of shipping.

These patterns emerged from research at Google and MIT and from production deployments at scale. They solve specific problems: How should an agent break down tasks? When should it stop iterating? How does it remember context across sessions? How do multiple agents coordinate without chaos?

The four core patterns cover most production use cases: ReAct for reasoning and acting in loops, Plan-and-Execute for complex multi-step tasks, Multi-Agent for parallel processing and specialization, and Tool-Use for extending capabilities beyond the base model. Learn more about implementing MCP servers and how Fast.io provides storage for AI agents.

Pattern Comparison: Which Architecture Fits Your Use Case?

Choosing between patterns requires understanding your task complexity, latency requirements, and failure modes. Here is how the four core patterns compare across key dimensions:

| Pattern | Best For | Latency | LLM Calls | Complexity | Failure Mode |
|---|---|---|---|---|---|
| ReAct | Interactive tasks, Q&A, debugging | Medium | Several per task | Low | Can loop indefinitely |
| Plan-and-Execute | Multi-step workflows, research | High | Few total | Medium | Plan may become stale |
| Multi-Agent | Parallel analysis, specialized tasks | Variable | Parallel streams | High | Coordination overhead |
| Tool-Use | Data retrieval, calculations | Low | Few per tool | Low | Tool errors propagate |

The ReAct pattern excels when tasks require adaptation. The agent reasons about what to do, takes an action, observes the result, and repeats. According to research from Princeton University and Google, implementing a structured architecture like ReAct can improve reliability in dynamic environments by over 30% compared to zero-shot prompting.

Multi-agent systems can reportedly handle 5x more complex tasks than single agents by distributing work across specialized components. But they introduce coordination challenges, and runaway behavior is harder to spot. One cautionary example: a marketing team's agent got stuck in a search loop and racked up thousands in API costs before anyone noticed.

For most production systems, start with ReAct or Tool-Use. Add planning when you see repeated patterns across sessions. Move to multi-agent only when you have clear task decomposition and monitoring in place.

The ReAct Pattern: Reasoning and Acting in Loops

The ReAct pattern combines reasoning and action in an iterative loop. The agent generates a "Thought" about what to do next, takes an "Action" like searching a database or calling an API, receives an "Observation" from that action, and repeats until the task is complete.

This pattern works best when the environment is partially observable or changing. The agent adapts its plan based on new observations rather than following a fixed sequence. Customer support is a classic use case: the agent searches documentation, finds an answer, realizes it is incomplete, searches again with refined terms, and continues until the query is resolved. The original ReAct paper by Yao et al. demonstrated this approach across multiple reasoning benchmarks.

Implementation Structure:

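    # llm, execute_action, and task_complete are placeholders for your own code
    MAX_ITERATIONS = 10  # hard cap so the loop cannot run away
    context = []
    iterations = 0
    while not task_complete(context) and iterations < MAX_ITERATIONS:
        thought = llm.reason(context)
        action = llm.act(thought)
        observation = execute_action(action)
        context.append((thought, action, observation))  # one tuple per step
        iterations += 1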

The loop continues until the agent determines the task is complete or hits a maximum iteration limit. Without that limit, you risk infinite loops and runaway costs.

ReAct requires state management between iterations. Each step's context must persist so the agent remembers what it already tried. For production deployments, store this state in persistent storage rather than memory. If your agent restarts mid-task, it should resume from where it left off.

Fast.io's MCP server provides 251 tools that integrate naturally with ReAct patterns. Agents can search workspaces, read files, and write results using the same tools whether they are on their first iteration or their twentieth. Webhooks notify downstream systems when the agent completes its loop, enabling reactive workflows without polling.

The main risk with ReAct is the loop running away. Implement circuit breakers: maximum iterations, cost limits per session, and human-in-the-loop escalation triggers. Log every thought, action, and observation for debugging when things go wrong.
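The circuit breakers described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: `CircuitBreaker`, `CircuitOpen`, `run_agent`, and the shape of the `step` callable (returning a thought, action, observation, cost, and optional final answer) are all hypothetical names chosen for the example, and the limits are arbitrary.

```python
from dataclasses import dataclass, field


class CircuitOpen(Exception):
    """Raised when a limit is reached and the run should stop or escalate."""


@dataclass
class CircuitBreaker:
    max_iterations: int = 10      # assumed default, tune per workload
    max_cost_usd: float = 1.00    # assumed default, tune per workload
    iterations: int = 0
    cost_usd: float = 0.0
    log: list = field(default_factory=list)

    def check(self) -> None:
        """Raise before the next step if any limit has been reached."""
        if self.iterations >= self.max_iterations:
            raise CircuitOpen(f"iteration limit reached ({self.iterations})")
        if self.cost_usd >= self.max_cost_usd:
            raise CircuitOpen(f"cost limit reached (${self.cost_usd:.2f})")

    def record(self, thought, action, observation, cost_usd) -> None:
        """Log one completed loop step and add its cost to the session total."""
        self.iterations += 1
        self.cost_usd += cost_usd
        self.log.append(
            {"thought": thought, "action": action, "observation": observation}
        )


def run_agent(step, breaker: CircuitBreaker) -> str:
    """Call `step` until it yields an answer or the breaker trips."""
    while True:
        try:
            breaker.check()
        except CircuitOpen as exc:
            # Hand off to a human instead of spending more.
            return f"ESCALATE_TO_HUMAN: {exc}"
        thought, action, observation, cost_usd, answer = step()
        breaker.record(thought, action, observation, cost_usd)
        if answer is not None:
            return answer
```

Checking the limits before each step, rather than after, means a step that produces the final answer on the last allowed iteration still returns normally.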

Plan-and-Execute: Decomposing Complex Tasks

The Plan-and-Execute pattern separates planning from execution. First, the agent creates a complete plan breaking the task into steps. Then it executes each step in sequence. This reduces total LLM calls compared to ReAct because planning happens once upfront rather than iteratively.

Research tasks benefit from this pattern. A financial analysis agent might plan steps like: gather quarterly reports, extract revenue metrics, calculate growth rates, identify anomalies, and draft a summary. Each step is explicit. The agent knows the full scope before starting.

When to Use Plan-and-Execute:

- Tasks with clear sub-task decomposition

- Workflows where you want visibility into progress

- Situations where plan validation is possible before execution

- Multi-step processes that humans review mid-flight

The pattern has drawbacks. Plans can become stale if the environment changes during execution. A step that depends on external data might fail if that data updates between planning and execution. The agent lacks the adaptive flexibility of ReAct.

Hybrid approaches work well. Plan for the known structure, but allow ReAct-style adaptation within individual steps. A document processing agent plans the overall workflow, but uses ReAct within the "extract data" step to handle varying document formats.

State management is critical. Store the plan, current step, and intermediate results persistently. If execution pauses, the agent should resume at the correct step with all previous context intact. File locks prevent multiple agent instances from executing the same plan simultaneously.
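As a rough sketch of the resume behavior described above, the plan, current step, and intermediate results can live in one JSON file that is rewritten after every step. The planner and executor here are stand-ins (a real system would call an LLM and tools), and `make_plan`, `execute_step`, `run_plan`, and the state layout are assumptions made for illustration.

```python
import json
from pathlib import Path


def make_plan(task: str) -> list[str]:
    """Stand-in planner. A real system would ask an LLM for these steps."""
    return [
        "gather quarterly reports",
        "extract revenue metrics",
        "calculate growth rates",
        "identify anomalies",
        "draft a summary",
    ]


def execute_step(step: str) -> str:
    """Stand-in executor. A real system would call tools or an LLM here."""
    return f"done: {step}"


def run_plan(task: str, state_path: Path) -> dict:
    """Execute a plan step by step, saving progress after every step."""
    if state_path.exists():
        # A previous run was interrupted: pick up where it stopped.
        state = json.loads(state_path.read_text())
    else:
        state = {
            "task": task,
            "plan": make_plan(task),
            "current_step": 0,
            "results": [],
        }

    while state["current_step"] < len(state["plan"]):
        step = state["plan"][state["current_step"]]
        state["results"].append(execute_step(step))
        state["current_step"] += 1
        # Persist before moving on so a crash loses at most one step.
        state_path.write_text(json.dumps(state))
    return state
```

If the process dies after step two, the next call to `run_plan` with the same path reloads the saved state and continues at step three with the earlier results intact.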

Fast.io supports this pattern through workspace organization. Each plan step can write to a specific folder, creating an audit trail of progress. Intelligence Mode indexes these outputs, enabling semantic search across intermediate results. Agents query prior steps with natural language: "What growth rate did we calculate in the previous step?"

Multi-Agent Architecture: Coordination and Specialization

Multi-agent systems distribute tasks across multiple specialized agents. One agent researches, another analyzes, a third writes summaries. They communicate through shared state, message passing, or a central orchestrator.

This pattern handles complexity that overwhelms single agents. Analyzing a market opportunity might require parallel analysis of competitor pricing, regulatory requirements, and technical feasibility. Three specialized agents work simultaneously, then a fourth synthesizes their outputs.

Coordination Patterns:

- Hub-and-spoke: A central orchestrator delegates to specialists and aggregates results

- Pipeline: Agents pass work sequentially, each adding their specialty

- Peer-to-peer: Agents communicate directly without central coordination

- Market-based: Agents bid for tasks based on capability and load

Each pattern has tradeoffs. Hub-and-spoke simplifies monitoring but creates a bottleneck. Peer-to-peer scales better but makes debugging harder. Choose based on your observability requirements and team expertise.

Multi-agent systems introduce new failure modes. Agents can deadlock waiting for each other. Messages get lost. One slow agent blocks the entire workflow. Implement timeouts, heartbeats, and circuit breakers at the coordination layer. The AutoGen framework paper from Microsoft Research covers these coordination challenges in detail.
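One way to keep a slow specialist from blocking a hub-and-spoke workflow is to give the orchestrator a shared deadline. The sketch below uses Python's standard `concurrent.futures` module. The `orchestrate` function and the idea of modeling each specialist as a plain callable are assumptions for illustration, and this is not how AutoGen or any particular framework does it.

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout


def orchestrate(specialists: dict, task: str, timeout_s: float = 2.0) -> dict:
    """Fan a task out to specialist callables and collect one result per agent."""
    results = {}
    pool = ThreadPoolExecutor(max_workers=len(specialists))
    deadline = time.monotonic() + timeout_s  # one deadline shared by all agents
    try:
        futures = {name: pool.submit(fn, task) for name, fn in specialists.items()}
        for name, future in futures.items():
            remaining = max(0.0, deadline - time.monotonic())
            try:
                output = future.result(timeout=remaining)
                results[name] = {"ok": True, "output": output}
            except FutureTimeout:
                results[name] = {"ok": False, "error": "timed out"}
            except Exception as exc:
                # A failing specialist is reported, not allowed to crash the hub.
                results[name] = {"ok": False, "error": str(exc)}
    finally:
        # Stop waiting; threads already running cannot be killed and will
        # finish in the background. cancel_futures needs Python 3.9+.
        pool.shutdown(wait=False, cancel_futures=True)
    return results
```

The synthesizing agent can then decide what to do with partial results, for example proceeding without the timed-out specialist or escalating.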

Storage architecture matters for multi-agent systems. Agents need shared access to state without stepping on each other. File locks coordinate access to shared resources. Versioned storage lets agents reference specific states: "Use the analysis from the latest version, not the previous one."
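To illustrate the locking idea on a local filesystem, the sketch below relies on atomic exclusive file creation. It is a generic example and not the Fast.io file lock API. The `file_lock` helper and the lock-file naming are hypothetical, and a production version would also need stale-lock handling (for instance, expiring locks whose owner has crashed).

```python
import os
from contextlib import contextmanager


@contextmanager
def file_lock(lock_path: str, owner: str):
    """Hold an exclusive lock file for the duration of the `with` block.

    Raises FileExistsError if another agent already holds the lock.
    """
    # O_CREAT | O_EXCL makes creation fail if the file already exists,
    # so only one caller can create (and therefore hold) the lock.
    fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    with os.fdopen(fd, "w") as handle:
        handle.write(owner)  # record who holds the lock, for debugging
    try:
        yield
    finally:
        os.remove(lock_path)  # release even if the work inside raised
```

An agent would wrap its edit in `with file_lock("analysis.lock", "agent-a"):` and treat `FileExistsError` as a signal to wait, retry later, or pick different work.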

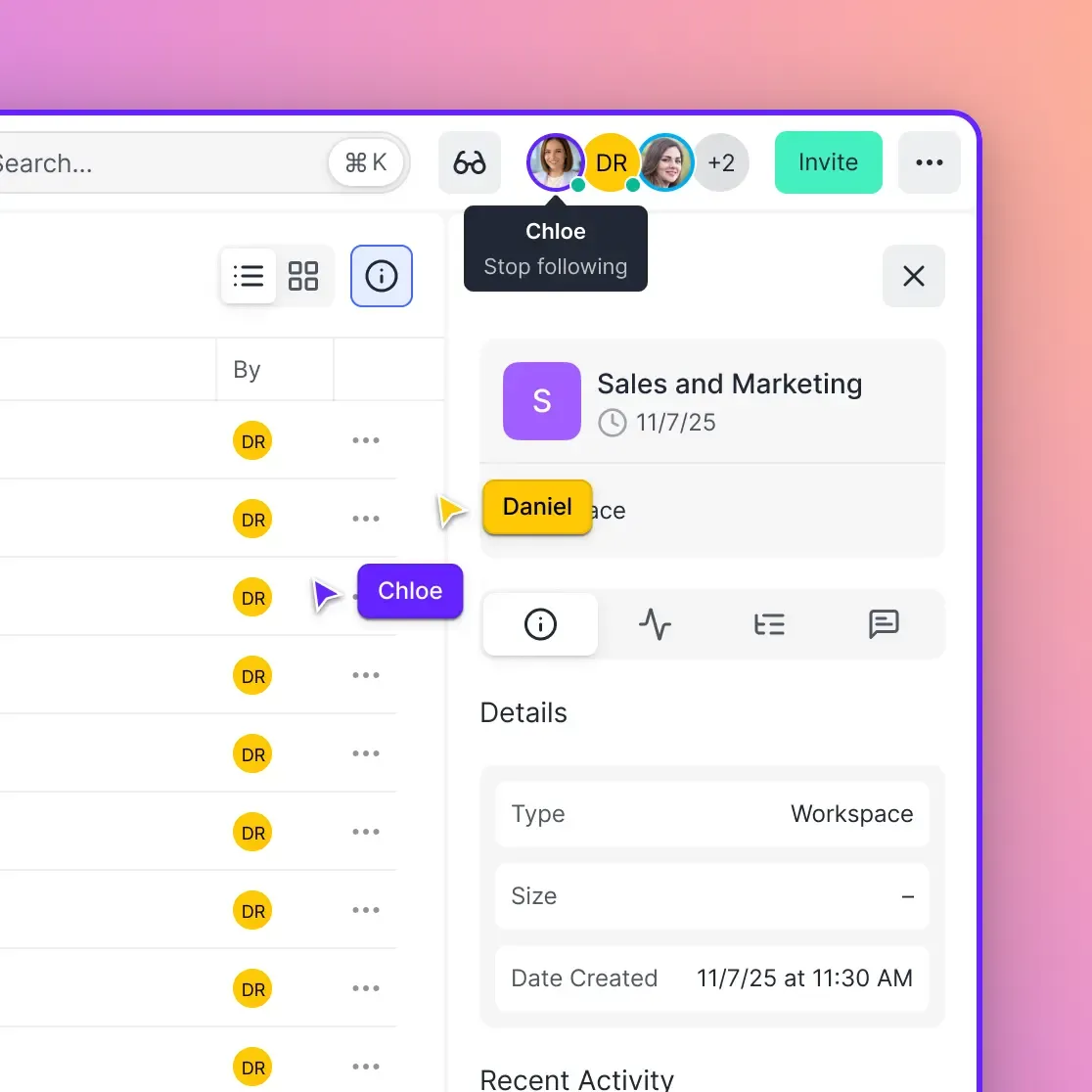

Fast.io's workspace model fits multi-agent architectures naturally. Create workspaces per project. Agents join as collaborators with role-based permissions. Real-time presence shows which agents are active. Activity logs track every action for debugging coordination issues.

The ownership transfer feature supports handoff workflows. An agent team builds a complete analysis workspace, then transfers ownership to a human reviewer. The agents retain access for follow-up questions, but the human controls the final deliverable.

Tool-Use Pattern: Extending Agent Capabilities

The Tool-Use pattern extends an agent's capabilities by giving it access to external functions. Rather than relying solely on training data, the agent calls APIs, queries databases, executes code, and performs calculations through defined interfaces.

This pattern is foundational. Even simple ReAct agents use tools. The innovation is structured function calling: the model outputs JSON specifying which tool to call with what parameters, the system executes it, and returns the result for the next reasoning step.
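The function-calling round trip can be sketched as a small dispatcher. The JSON shape used here (`{"tool": ..., "arguments": {...}}`) is an assumption for the example, since each model provider defines its own format, and `search_docs`, `add`, `TOOLS`, and `dispatch` are hypothetical names.

```python
import json


def search_docs(query: str) -> str:
    """Stand-in tool. A real one would query a search index."""
    return f"top result for '{query}'"


def add(a: float, b: float) -> float:
    """Stand-in computation tool."""
    return a + b


TOOLS = {"search_docs": search_docs, "add": add}


def dispatch(model_output: str) -> dict:
    """Parse a JSON tool call, run the tool, and return a structured result."""
    try:
        call = json.loads(model_output)
        name, args = call["tool"], call["arguments"]
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        return {"ok": False, "error": f"malformed tool call: {exc}"}

    if name not in TOOLS:
        # Tell the model what it could have called instead.
        return {"ok": False, "error": f"unknown tool '{name}'; available: {sorted(TOOLS)}"}

    try:
        return {"ok": True, "result": TOOLS[name](**args)}
    except TypeError as exc:
        # Wrong or missing parameters surface as a readable message.
        return {"ok": False, "error": f"bad arguments for '{name}': {exc}"}
```

Returning errors as data, rather than raising, lets the result flow back into the next reasoning step so the model can correct its own call.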

Common Tool Categories:

- Data retrieval: Search, database queries, API calls

- Computation: Calculators, code execution, data processing

- Action: Sending emails, creating tickets, updating records

- Memory: Reading and writing to persistent storage

Tool design impacts agent reliability. Good tools have clear descriptions, strict schemas, and helpful error messages. The agent should understand not just what the tool does, but when to use it and what errors mean.

The Model Context Protocol (MCP) standardizes tool interfaces. Instead of custom integrations for each service, agents use standardized tools that work across providers. Fast.io's MCP server offers 251 tools covering file operations, workspace management, and AI features. Agents read files, search content, create shares, and manage permissions through consistent interfaces.

Tools also need state management. A tool that updates a database should be idempotent. Calling it twice with the same parameters should have the same effect as calling it once. This matters when agents retry failed actions or resume interrupted workflows.
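A common way to get this property is an idempotency key supplied by the caller. The sketch below is one possible approach under that assumption. `TicketStore` and its in-memory dictionaries are hypothetical, and a real tool would persist the keys alongside the data.

```python
class TicketStore:
    """In-memory stand-in for a ticketing backend with idempotent creates."""

    def __init__(self):
        self.tickets = {}  # ticket_id -> ticket data
        self._seen = {}    # idempotency_key -> ticket_id

    def create_ticket(self, idempotency_key: str, title: str) -> str:
        """Create a ticket once per key; repeat calls return the same ID."""
        if idempotency_key in self._seen:
            # A retry or resumed workflow: do not create a duplicate.
            return self._seen[idempotency_key]
        ticket_id = f"T-{len(self.tickets) + 1}"
        self.tickets[ticket_id] = {"title": title}
        self._seen[idempotency_key] = ticket_id
        return ticket_id
```

The agent generates the key once per intended action (for example, from the plan step ID) and reuses it on every retry of that action.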

Error handling deserves attention. When a tool fails, the agent needs context to decide what to do next. Retry? Try a different tool? Escalate to a human? Log tool errors with full context for debugging. See our guide on agent error handling patterns for practical retry and fallback strategies.
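The retry, fallback, and escalate decision can be sketched as a single wrapper. Everything here is illustrative: `ToolError`, its `retryable` flag, `call_with_recovery`, and the backoff values are assumptions, not part of any specific framework.

```python
import time


class ToolError(Exception):
    """Tool failure that says whether retrying could plausibly help."""

    def __init__(self, message: str, retryable: bool):
        super().__init__(message)
        self.retryable = retryable


def call_with_recovery(primary, fallback, max_retries=3, base_delay_s=0.01,
                       sleep=time.sleep) -> dict:
    """Retry the primary tool, then try a fallback, then escalate."""
    errors = []  # full error history, kept for logging and debugging
    for attempt in range(max_retries):
        try:
            return {"status": "ok", "result": primary(), "errors": errors}
        except ToolError as exc:
            errors.append(f"primary attempt {attempt + 1}: {exc}")
            if not exc.retryable or attempt == max_retries - 1:
                break
            sleep(base_delay_s * 2 ** attempt)  # exponential backoff

    try:
        return {"status": "ok_fallback", "result": fallback(), "errors": errors}
    except ToolError as exc:
        errors.append(f"fallback: {exc}")
        # Nothing worked: hand the decision to a human with full context.
        return {"status": "escalate", "result": None, "errors": errors}
```

Passing `sleep` as a parameter keeps the wrapper easy to test without real delays.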

Memory and State Management in Agent Architectures

Memory separates stateless API calls from persistent agents. Without memory, each interaction starts from scratch. With memory, agents learn from past sessions, maintain context across conversations, and resume interrupted tasks.

Agent memory has three layers:

Short-term memory holds the current conversation context. It is limited by the model's context window. As conversations grow, older context gets dropped or summarized. This is working memory: what the agent needs right now to continue the current task.

Long-term memory persists across sessions. It stores facts about users, preferences, past decisions, and completed work. Vector databases enable semantic retrieval: "What did we discuss about pricing last month?" retrieves relevant conversation segments even without exact keyword matches.

External memory is structured storage outside the agent. Databases, file systems, and APIs hold data too large or structured for vector search. Agents query external memory through tools, treating it like any other resource.
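The hand-off between short-term and long-term memory can be illustrated with a toy class. This sketch makes two loud simplifications: it counts words instead of model tokens, and it ranks long-term entries by keyword overlap as a stand-in for the vector similarity search a real system would use. `AgentMemory` and its methods are hypothetical.

```python
from collections import deque


class AgentMemory:
    """Toy two-layer memory: a bounded working buffer plus an archive."""

    def __init__(self, max_words: int = 50):
        self.max_words = max_words  # stand-in for the context window budget
        self.short_term = deque()   # what the agent sees right now
        self.long_term = []         # evicted messages, searchable later

    def _word_count(self) -> int:
        return sum(len(message.split()) for message in self.short_term)

    def add(self, message: str) -> None:
        """Append a message, archiving the oldest ones if over budget."""
        self.short_term.append(message)
        while self._word_count() > self.max_words and len(self.short_term) > 1:
            self.long_term.append(self.short_term.popleft())

    def recall(self, query: str, k: int = 1) -> list:
        """Return the k archived messages sharing the most words with the query."""
        query_words = set(query.lower().split())
        ranked = sorted(
            self.long_term,
            key=lambda m: len(query_words & set(m.lower().split())),
            reverse=True,
        )
        return ranked[:k]
```

In a fuller design the evicted messages would be summarized or embedded before storage, and external memory would sit behind tools rather than inside this class.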

Storage architecture choices impact performance and reliability. Ephemeral, session-scoped storage works for temporary processing but loses data when sessions end. Persistent storage like Fast.io maintains files, workspaces, and organizational structure indefinitely.

For production agents, external memory should support:

- Organization-owned data: Files belong to the organization, not individual agent accounts

- Structured workspaces: Projects organized in discoverable spaces, not scattered folders

- Versioning: Track changes and reference specific versions

- Access controls: Granular permissions for different agents and humans

- Audit trails: Complete history of who accessed what and when

Fast.io's Intelligence Mode provides built-in RAG without managing a separate vector database. Toggle it on a workspace and files are automatically indexed for semantic search. Agents query documents in natural language and receive cited answers. This eliminates infrastructure overhead while giving agents powerful memory capabilities.

State checkpointing enables fault tolerance. Agents periodically save their full state: plan progress, intermediate results, conversation context. If an agent crashes, it resumes from the last checkpoint rather than starting over. This is essential for long-running tasks that might span hours or days.
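A checkpoint is only useful if a crash during the write cannot corrupt it. One common technique, sketched below with the Python standard library, is to write to a temporary file and then atomically rename it over the previous checkpoint. The function names and the JSON state layout are assumptions for illustration.

```python
import json
import os
import tempfile


def save_checkpoint(path: str, state: dict) -> None:
    """Write state to disk so readers never see a half-written file."""
    directory = os.path.dirname(os.path.abspath(path))
    # The temp file must be on the same filesystem for the rename to be atomic.
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w") as handle:
        json.dump(state, handle)
        handle.flush()
        os.fsync(handle.fileno())  # push bytes to disk before the rename
    os.replace(tmp_path, path)     # atomically swap in the new checkpoint


def load_checkpoint(path: str, default: dict) -> dict:
    """Return the last saved state, or `default` on a fresh start."""
    try:
        with open(path) as handle:
            return json.load(handle)
    except FileNotFoundError:
        return default
```

An agent would call `save_checkpoint` after each meaningful unit of work and `load_checkpoint` once at startup.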

Evidence and Benchmarks: What the Data Shows

Claims about agent architecture performance need evidence. Here is what the research shows:

According to research from Princeton University and Google, implementing a structured architecture like ReAct can improve reliability in dynamic environments by over 30% compared to zero-shot prompting. The iterative reasoning loop allows agents to recover from errors rather than committing to an initial incorrect path.

Multi-agent systems demonstrate significant capability improvements for parallel tasks. Research from Microsoft suggests that well-architected multi-agent systems can handle 5x more complex tasks than single generalist agents by partitioning the cognitive load. However, this comes with coordination overhead that can reduce performance by up to 70% on sequential tasks if not properly managed.

Cost analysis reveals planning patterns reduce total LLM calls by 40-60% for multi-step tasks compared to pure ReAct implementations. The upfront planning cost pays off when the same plan executes multiple similar tasks.

Production Deployment Metrics:

- ReAct agents without iteration limits frequently enter infinite loops

- Plan-and-Execute reduces per-task latency variance compared to ReAct

- Multi-agent systems are harder to debug when production issues arise

- Tool-use patterns with structured error handling improve recovery rates

These figures and observations come from aggregate production deployments, so treat them as directional. Your results will vary based on task complexity, model choice, and implementation quality. Start with telemetry: instrument every agent action, measure actual costs, and compare patterns on your specific workload.
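Instrumenting every agent action can start as small as a decorator. The sketch below keeps counts and timings in an in-process dictionary. `METRICS` and `instrumented` are hypothetical names, and a production setup would export these values to a metrics or tracing system and add token and cost fields.

```python
import time
from collections import defaultdict
from functools import wraps

# Per-action counters; a real deployment would export these instead.
METRICS = defaultdict(lambda: {"calls": 0, "errors": 0, "seconds": 0.0})


def instrumented(name: str):
    """Decorator that records call count, error count, and elapsed time."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            try:
                return fn(*args, **kwargs)
            except Exception:
                METRICS[name]["errors"] += 1
                raise  # telemetry must not swallow the failure
            finally:
                METRICS[name]["calls"] += 1
                METRICS[name]["seconds"] += time.perf_counter() - start
        return wrapper
    return decorator
```

Wrapping each tool and each LLM call with `@instrumented("...")` gives a per-action baseline for comparing patterns on the same workload.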

Implementing Agent Architectures with Fast.io

Fast.io provides infrastructure for agent architectures with persistent storage, state management, and human-agent collaboration built in. The platform is not storage with an AI feature. The workspace itself is intelligent.

Key Capabilities for Agent Architectures:

MCP-Native Design: Fast.io's Model Context Protocol server exposes 251 tools via Streamable HTTP and SSE. Every UI capability has a corresponding agent tool. Agents create workspaces, manage files, set permissions, and query indexed content through standardized interfaces.

Intelligence Mode: Toggle RAG indexing per workspace. Files are automatically indexed for semantic search when uploaded. Agents query documents with natural language and receive cited answers. No separate vector database to manage.

Ownership Transfer: Agents build complete workspaces with files, shares, and permissions, then transfer ownership to humans. The agent retains admin access for follow-up work, but the human controls the deliverable.

Webhooks: Receive real-time notifications when files change. Build reactive workflows where downstream processes trigger automatically when agents complete tasks.

File Locks: Coordinate concurrent access in multi-agent systems. Acquire locks before editing, release when done. Prevents conflicts when multiple agents work on shared resources.

URL Import: Pull files from Google Drive, OneDrive, Box, and Dropbox via OAuth. No local I/O required. Agents import external data directly into workspaces.

The free agent tier provides 50GB storage, 1GB max file size, and 5,000 credits monthly with no credit card required. This is permanent free access, not a trial. Agents sign up like human users and start building immediately.

Fast.io works with any LLM: Claude, GPT, Gemini, LLaMA, or local models. The MCP server and OpenClaw integration are LLM-agnostic. Use the tools that fit your stack.

For agent developers, the combination of persistent storage, built-in RAG, and hundreds of MCP tools eliminates infrastructure complexity. Focus on agent logic rather than managing databases, vector stores, and file systems.

Frequently Asked Questions

What is the ReAct pattern in AI agents?

The ReAct pattern is an iterative loop where an agent reasons about what to do (Thought), takes an action like searching or calling an API (Action), observes the result (Observation), and repeats until the task completes. It excels at adaptive tasks where the agent must adjust based on new information. According to Princeton University and Google Research, ReAct can improve reliability by over 30% compared to zero-shot prompting for dynamic tasks.

How do multi-agent systems communicate?

Multi-agent systems communicate through shared state, message passing, or central orchestration. Common patterns include hub-and-spoke (central coordinator delegates to specialists), pipeline (sequential handoffs between agents), and peer-to-peer (direct agent-to-agent communication). Fast.io workspaces enable multi-agent coordination through shared storage, real-time presence indicators, and file locks that prevent conflicts when agents access the same resources.

What is agent memory architecture?

Agent memory architecture has three layers: short-term (current conversation context within the model's context window), long-term (persistent storage across sessions using vector databases for semantic retrieval), and external (structured storage in databases or file systems). Fast.io's Intelligence Mode provides built-in RAG indexing, eliminating the need to manage a separate vector database while giving agents semantic search across workspace files.

When should I use Plan-and-Execute vs ReAct?

Use Plan-and-Execute when tasks have clear decomposition into steps and you want visibility into progress before execution begins. It reduces total LLM calls for multi-step workflows. Use ReAct when tasks require adaptation based on intermediate results or when the environment changes during execution. Many production systems use a hybrid: Plan-and-Execute for overall structure with ReAct adaptation within individual steps.

How do I prevent agents from running away and generating excessive API costs?

Implement circuit breakers: set maximum iteration limits, cost limits per session, and timeout thresholds. Log every thought, action, and observation for debugging. Use file locks to prevent multiple agent instances from duplicating work. Monitor token usage in real-time and escalate to human review when thresholds are exceeded. Fast.io's audit logs track all agent actions, making it easier to identify runaway behavior patterns.

What infrastructure do I need for production agent deployment?

Production agents need persistent storage for state and outputs, state management for resuming interrupted tasks, memory for context across sessions, and monitoring for debugging. Rather than building this infrastructure yourself, platforms like Fast.io provide organization-owned workspaces, built-in RAG indexing without separate vector databases, 251 MCP tools for agent actions, webhooks for reactive workflows, and ownership transfer for human handoffs. The free agent tier includes 50GB storage with no credit card required.

Start with AI agent architecture patterns on Fast.io

Fast.io gives you 251 MCP tools, built-in RAG indexing, and 50GB free storage for your agents. No credit card required. Sign up and start building in minutes.