How to Implement Agentic RAG: A Complete Technical Guide

Agentic RAG is a retrieval-augmented generation pattern where autonomous agents dynamically decide what to retrieve, when to retrieve it, and how to use retrieved information. Unlike basic RAG, which retrieves once and then generates, agentic RAG enables multi-step reasoning with iterative retrieval cycles. This guide covers the complete implementation, including architecture design, storage patterns, query planning, and production deployment.

What Is Agentic RAG and How Does It Work?

Agentic RAG extends basic RAG by adding decision-making loops that let the system refine queries, evaluate retrieved context, and iterate until the answer meets quality thresholds.

In a standard RAG pipeline, the flow is linear: user query → single retrieval → LLM generation. Agentic RAG replaces this with a cyclical process where an agent can:

- Route queries to the most appropriate data source or tool

- Grade documents for relevance and decide if more retrieval is needed

- Rewrite queries when initial retrieval returns insufficient context

- Iterate through multiple retrieval-generation cycles

The key difference is autonomy. Basic RAG follows a fixed retrieval strategy. Agentic RAG delegates strategic decisions to the LLM, which evaluates context quality and decides next steps dynamically.

When to Use Agentic RAG

Choose agentic RAG when your use case involves:

- Complex questions requiring multiple pieces of evidence

- Queries that span multiple document collections or data sources

- Scenarios where initial retrieval often misses relevant context

- Applications needing cited, verifiable answers with source attribution

Skip agentic RAG for simple FAQ-style queries or when latency requirements are strict (each agent loop adds overhead). For simpler use cases, standard RAG implementations may be sufficient.

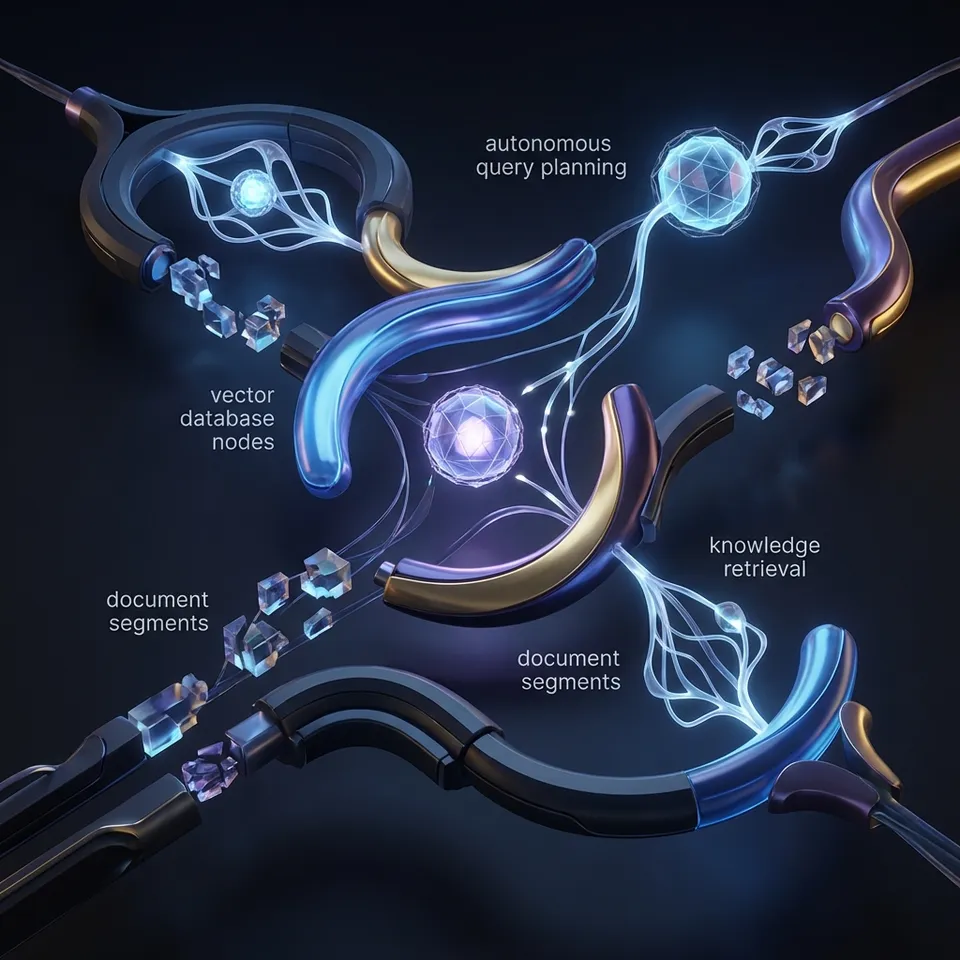

Agentic RAG Architecture Components

A production-ready agentic RAG system consists of five core components working in coordination:

1. Query Router

The router analyzes incoming questions and decides which retrieval path to take. It might route to:

- Vector database for semantic similarity search

- Structured database for exact lookups

- Web search for real-time information

- Direct LLM response for questions answerable from training data

Routing decisions use the LLM to classify query intent and select tools. A well-designed router reduces unnecessary retrievals and improves response quality.

2. Document Retriever

The retriever fetches context from selected sources. Implementation options include:

- Dense retrieval: Vector similarity search using embeddings

- Sparse retrieval: BM25 or TF-IDF for keyword matching

- Hybrid retrieval: Combining dense and sparse approaches

- Multi-hop retrieval: Following relationships between documents

The retriever returns ranked chunks or documents with relevance scores.

3. Document Grader

After retrieval, a grader evaluates whether retrieved documents actually answer the query. This is typically an LLM call that outputs a binary decision (relevant/irrelevant) or a relevance score.

If documents fail grading, the agent loops back to query rewriting or alternative retrieval strategies.

4. Query Rewriter

When initial retrieval fails, the rewriter reformulates the query to improve results. Techniques include:

- Expanding acronyms or technical terms

- Breaking complex queries into sub-queries

- Adding synonyms or related concepts

- Removing ambiguous terms

The rewritten query then feeds back into the retrieval pipeline.

5. Answer Generator

The generator synthesizes answers from retrieved context. It produces:

- The final response

- Citations linking claims to source documents

- Confidence scores or uncertainty indicators

Advanced implementations include self-correction loops where the generator critiques its own output before finalizing.

How to Design Document Storage Architecture for Scalable RAG

Most agentic RAG tutorials focus on the orchestration layer while glossing over document storage architecture. This is a critical oversight. Your storage layer determines scalability limits, retrieval latency, and operational complexity.

The Storage Challenge

Agentic RAG multiplies storage requirements compared to basic RAG:

- Multiple retrieval cycles mean more vector searches per query (typically 3-5 cycles per complex query)

- Document grading requires fast access to full document text

- Query rewriting benefits from term frequency analysis

- Citation tracking needs source document metadata

A naive implementation that stores everything in memory or in a single vector database quickly hits scaling limits. Production systems need a three-tier approach.

Recommended Architecture

Layer 1: Hot Storage (Vector Database)

Store document chunks with embeddings for semantic search. Use a dedicated vector database such as Pinecone or Weaviate, or the pgvector extension for PostgreSQL. This layer handles the bulk of retrieval traffic with p95 latency under 100ms.

Layer 2: Warm Storage (Document Store)

Keep full document text in a document database (MongoDB, DynamoDB) or object storage with metadata indexes. This serves grading, citation extraction, and full-text needs. Access latency should stay under 50ms.

Layer 3: Cold Storage (Archive)

Archive original files (PDFs, Word docs) in object storage (S3, Azure Blob) for audit trails and reprocessing. Access is infrequent but necessary for compliance. This tier can tolerate 500ms+ latency.

Storage Pattern: Workspace-Isolated Indexes

For multi-tenant applications, isolate indexes by workspace or customer:

- Each workspace gets its own vector collection or namespace

- Document stores use workspace prefixes or separate tables

- Query routing includes workspace context to limit search scope

This pattern prevents cross-contamination and enables workspace-level permissions. Fast.io uses this approach with workspace-scoped Intelligence Mode, where enabling RAG on a workspace automatically creates an isolated index for that workspace's files.
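The minimal in-memory sketch below makes the isolation concrete. The `WorkspaceIndex` class is hypothetical, and a case-insensitive substring match stands in for vector search. Because every lookup must name a workspace, one tenant's query never scans another tenant's chunks.

```python
from collections import defaultdict


class WorkspaceIndex:
    """Toy in-memory index that keeps each workspace's chunks in a separate namespace."""

    def __init__(self) -> None:
        # workspace_id -> {chunk_id: text}
        self._namespaces: dict[str, dict[str, str]] = defaultdict(dict)

    def add(self, workspace_id: str, chunk_id: str, text: str) -> None:
        self._namespaces[workspace_id][chunk_id] = text

    def search(self, workspace_id: str, term: str) -> list[str]:
        # Only the caller's namespace is scanned; other tenants' chunks are never touched.
        chunks = self._namespaces.get(workspace_id, {})
        return sorted(cid for cid, text in chunks.items() if term.lower() in text.lower())


index = WorkspaceIndex()
index.add("acme", "a1", "Acme refund policy")
index.add("globex", "g1", "Globex refund policy")
print(index.search("acme", "refund"))  # ['a1']
```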

Handling Document Updates

Documents change. Your storage must handle:

- Incremental indexing: Add new chunks without full reindexing

- Versioning: Retain previous versions for audit trails

- Deletion: Remove chunks when documents are deleted

Implement event-driven updates where document changes trigger reindexing workflows. Webhooks can notify your RAG system when source files change, triggering automated reindexing without polling. This reduces update lag from minutes to seconds.
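The three update cases can be handled by a single event handler, sketched below. This is a minimal illustration, not Fast.io's or any vendor's webhook API. The event payload fields (`type`, `doc_id`, `version`, `text`) are assumptions, and splitting on blank lines stands in for a real chunker.

```python
from dataclasses import dataclass, field


@dataclass
class ChunkStore:
    """Toy index keyed by document ID; stands in for a real vector store."""
    chunks: dict[str, list[str]] = field(default_factory=dict)   # doc_id -> current chunks
    versions: dict[str, list[int]] = field(default_factory=dict)  # doc_id -> indexed versions

    def handle_event(self, event: dict) -> None:
        """Apply one change event (hypothetical payload shape)."""
        doc_id = event["doc_id"]
        if event["type"] == "deleted":
            # Deletion: drop the document's chunks so they can no longer be retrieved
            self.chunks.pop(doc_id, None)
            return
        # Created or updated: reindex only this document instead of rebuilding everything
        self.chunks[doc_id] = [p for p in event["text"].split("\n\n") if p.strip()]
        # Versioning: remember every indexed version for audit trails
        self.versions.setdefault(doc_id, []).append(event["version"])


store = ChunkStore()
store.handle_event({"type": "created", "doc_id": "d1", "version": 1, "text": "Intro\n\nDetails"})
store.handle_event({"type": "updated", "doc_id": "d1", "version": 2, "text": "New intro"})
print(store.chunks["d1"], store.versions["d1"])  # ['New intro'] [1, 2]
```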

Agentic RAG Implementation: Building the Agent Loop

The agent loop is the control flow that orchestrates retrieval, grading, and generation decisions. Here is a state-machine implementation pattern used in production systems:

State Definitions

START → ROUTE → RETRIEVE → GRADE → (REWRITE → RETRIEVE) → GENERATE → END

Each state performs a specific function and transitions based on evaluation criteria.

Implementation with LangGraph

LangGraph provides a graph-based framework for implementing agent loops. Define states as nodes and transitions as conditional edges:

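```python
# Node names in the agent graph
states = ["route", "retrieve", "grade", "rewrite", "generate"]


def should_rewrite(state: dict) -> bool:
    # Conditional edge after "grade": True loops to "rewrite", False proceeds to "generate".
    # Rewrite only while documents grade poorly AND the rewrite budget (3) remains.
    return state["grade_score"] < 0.7 and state["rewrite_count"] < 3


def route_query(state: dict) -> str:
    # Conditional edge after "route": return the path the router node stored in state.
    return state["router_decision"]
```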

Key Implementation Details

Set iteration limits: Cap rewrite loops at 3-5 iterations to prevent infinite cycles. Track iteration count in state and force generation if exceeded.

Pass full context: Each loop should accumulate context, not replace it. The generator sees all retrieved chunks across iterations.

Log decisions: Record routing decisions, grade scores, and rewrite steps. This telemetry is essential for debugging and improving the system.
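The three practices above fit into a small driver loop. This sketch is plain Python rather than LangGraph, so it runs without dependencies. The `retrieve`, `grade`, `rewrite`, and `generate` callables are hypothetical stand-ins for your own components, and routing is omitted for brevity. The 0.7 threshold and the cap of 3 mirror the `should_rewrite` example.

```python
from typing import Callable

MAX_REWRITES = 3      # hard cap on rewrite loops
PASS_THRESHOLD = 0.7  # grade score that ends the loop


def run_agent(
    question: str,
    retrieve: Callable[[str], list[str]],
    grade: Callable[[str, list[str]], float],
    rewrite: Callable[[str], str],
    generate: Callable[[str, list[str]], str],
) -> tuple[str, list[str]]:
    """RETRIEVE -> GRADE -> (REWRITE -> RETRIEVE) -> GENERATE, with a loop cap."""
    query = question
    context: list[str] = []  # accumulated across iterations, never replaced
    log: list[str] = []      # decision telemetry for debugging
    rewrites = 0
    while True:
        docs = retrieve(query)
        context.extend(d for d in docs if d not in context)
        score = grade(query, docs)
        log.append(f"grade query={query!r} score={score:.2f}")
        if score >= PASS_THRESHOLD or rewrites >= MAX_REWRITES:
            break  # good enough, or budget exhausted: force generation
        query = rewrite(query)
        rewrites += 1
        log.append(f"rewrite #{rewrites} -> {query!r}")
    # Answer the user's original question using everything gathered so far
    return generate(question, context), log


# Toy usage: the first query finds nothing, the rewritten one finds a document
corpus = {"q": [], "q expanded": ["doc A"]}
answer, log = run_agent(
    "q",
    retrieve=lambda q: corpus.get(q, []),
    grade=lambda q, docs: 1.0 if docs else 0.0,
    rewrite=lambda q: q + " expanded",
    generate=lambda q, ctx: f"{q}: {ctx}",
)
print(answer)  # q: ['doc A']
```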

Error Handling

Agent loops can fail in specific ways:

- Hallucinated routing: Router selects non-existent data source

- Grading drift: Grader becomes too strict or too lenient over time

- Rewrite explosions: Query length grows unbounded through iterations

Add guardrails: validate router outputs against available tools, calibrate grader with labeled examples, and cap query length.
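Two of these guardrails are simple enough to sketch directly. The tool names and the 300-character cap below are hypothetical placeholders, so substitute your own registry and limit. Grader calibration needs labeled data and is covered in the grading section.

```python
AVAILABLE_TOOLS = {"vector_search", "sql_lookup", "web_search", "direct_answer"}  # hypothetical
MAX_QUERY_CHARS = 300  # assumed cap; tune for your retriever


def validate_route(decision: str, default: str = "vector_search") -> str:
    """Reject hallucinated routes: anything not in the tool registry falls back to a default."""
    decision = decision.strip().lower()
    return decision if decision in AVAILABLE_TOOLS else default


def cap_query(rewritten: str, limit: int = MAX_QUERY_CHARS) -> str:
    """Stop rewrite explosions by truncating to whole words within the limit."""
    if len(rewritten) <= limit:
        return rewritten
    head = rewritten[: limit + 1]  # one extra char tells us if the cut lands on a word boundary
    if " " in head:
        return head.rsplit(" ", 1)[0]
    return rewritten[:limit]  # a single very long token: hard cut
```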

Building the Query Router

The router is the first decision point in agentic RAG. A well-designed router improves accuracy and reduces latency by avoiding unnecessary retrievals.

Router Design Patterns

Intent Classification Router

Train or prompt the LLM to classify queries into categories:

- retrieve_documents: Needs document lookup

- query_database: Needs structured data

- general_knowledge: Answerable from training data

- clarification_needed: Query is ambiguous

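```python
# `llm` is a placeholder for your LLM client; `classify` stands in for the call
# that returns the model's label.
def classify_intent(query: str) -> str:
    prompt = (
        "Classify this query into one of: retrieve_documents, query_database, "
        f"general_knowledge, clarification_needed. Query: {query}"
    )
    return llm.classify(prompt)
```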

Confidence-Based Router

Use the LLM's confidence to decide. If the model is confident it can answer without retrieval, skip it. Otherwise, retrieve.

Metadata Router

Route based on query content. If the query mentions specific document types, route to the appropriate collection.
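A metadata router can be as simple as keyword matching. In this sketch the keyword lists are invented for illustration, and the collection names match the `data_sources` example that follows. Prefix matching lets "tickets" match "ticket".

```python
import re

# Hypothetical keyword hints per collection
COLLECTION_KEYWORDS = {
    "engineering_docs": ["api", "endpoint", "sdk"],
    "support_tickets": ["ticket", "customer", "complaint"],
    "product_specs": ["spec", "requirement", "prd"],
}


def route_by_metadata(query: str, default: str = "engineering_docs") -> str:
    """Return the collection whose keywords appear most often in the query, else a default."""
    words = re.findall(r"[a-z0-9]+", query.lower())
    hits = {
        name: sum(any(w.startswith(k) for k in keywords) for w in words)
        for name, keywords in COLLECTION_KEYWORDS.items()
    }
    best = max(hits, key=hits.get)
    return best if hits[best] > 0 else default


print(route_by_metadata("Find support tickets from angry customers"))  # support_tickets
```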

Multi-Data Source Routing

For systems with multiple knowledge bases:

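```python
# Descriptions help the LLM pick the right knowledge base.
data_sources = {
    "engineering_docs": "Technical documentation and API references",
    "support_tickets": "Past customer support conversations",
    "product_specs": "Product requirements and specifications",
}


def route_to_source(query: str) -> str:
    # One source per line so the model sees each name next to its description.
    # `llm.select_source` is a placeholder for your LLM call.
    listing = "\n".join(f"- {name}: {desc}" for name, desc in data_sources.items())
    prompt = f"Given these data sources:\n{listing}\nWhich should be queried for: {query}?"
    return llm.select_source(prompt)
```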

Router Evaluation

Measure router performance separately from the full pipeline:

- Precision: When router says "retrieve," is retrieval actually needed?

- Recall: When retrieval is needed, does router trigger it?

- Latency: Router adds overhead. Production systems should keep router latency under 200ms according to best practices from the OpenAI function calling documentation.
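Precision and recall can be computed from a labeled set of router decisions. This is a minimal sketch, and the boolean-list input format is an assumption.

```python
def router_metrics(decisions: list[bool], needed: list[bool]) -> dict[str, float]:
    """Precision/recall of the 'retrieve' decision against labeled ground truth.

    decisions[i] is True if the router chose to retrieve for example i;
    needed[i] is True if a human labeled retrieval as necessary.
    """
    tp = sum(d and n for d, n in zip(decisions, needed))
    fp = sum(d and not n for d, n in zip(decisions, needed))
    fn = sum(n and not d for d, n in zip(decisions, needed))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"precision": precision, "recall": recall}


print(router_metrics([True, True, False, True], [True, False, True, True]))  # both 2/3
```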

Document Retrieval Strategies

Retrieval quality determines answer quality. Agentic RAG benefits from sophisticated retrieval that basic RAG cannot support due to latency constraints.

Dense Retrieval with Embeddings

Standard approach: embed documents and queries into vectors, find nearest neighbors.

Chunking strategy matters:

- Too small: lose context

- Too large: dilute relevance signals

- Overlapping chunks: preserve cross-boundary context

Recommended: 512-1024 tokens with 20% overlap for most documents.
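The sliding-window chunker below implements that overlap rule. The sketch treats whitespace-separated words as tokens. A real pipeline would count tokens with the embedding model's own tokenizer.

```python
def chunk_tokens(tokens: list[str], size: int = 512, overlap_ratio: float = 0.2) -> list[list[str]]:
    """Split tokens into fixed-size windows that overlap by overlap_ratio * size tokens."""
    if size <= 0 or not 0 <= overlap_ratio < 1:
        raise ValueError("size must be positive and overlap_ratio in [0, 1)")
    step = max(1, size - int(size * overlap_ratio))  # how far each window advances
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # this window already reaches the end
    return chunks


print(chunk_tokens("a b c d e f g h i j".split(), size=5))
# [['a', 'b', 'c', 'd', 'e'], ['e', 'f', 'g', 'h', 'i'], ['i', 'j']]
```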

Sparse Retrieval with BM25

Keyword matching complements semantic search. BM25 excels at:

- Exact entity matches (product codes, IDs)

- Rare technical terms

- Queries with specific numbers or dates
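For reference, here is a compact Okapi BM25 scorer. It uses k1 = 1.2 and b = 0.75 (Lucene's defaults) with the non-negative IDF form ln(1 + (N - n + 0.5) / (n + 0.5)). Lowercase whitespace tokenization is a simplification. In practice you would rely on a search engine or library rather than this loop.

```python
import math
from collections import Counter


def bm25_scores(query: str, docs: list[str], k1: float = 1.2, b: float = 0.75) -> list[float]:
    """Okapi BM25 score of each document for the query."""
    if not docs:
        return []
    tokenized = [d.lower().split() for d in docs]
    n_docs = len(tokenized)
    avgdl = sum(len(t) for t in tokenized) / n_docs
    # Document frequency: how many documents contain each term
    df = Counter(term for t in tokenized for term in set(t))
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            # k1 saturates repeated terms; b normalizes for document length
            norm = tf[term] * (k1 + 1) / (tf[term] + k1 * (1 - b + b * len(tokens) / avgdl))
            score += idf * norm
        scores.append(score)
    return scores


docs = [
    "error code E1234 in payment service",
    "payment service latency overview",
    "how to reset a password",
]
print(bm25_scores("E1234", docs))  # only the first document scores above zero
```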

Hybrid Retrieval

Combine dense and sparse scores:

final_score = alpha * dense_score + (1 - alpha) * sparse_score

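Alpha typically ranges from 0.5 to 0.7, weighting semantic search equally or more heavily. BM25 scores are unbounded while cosine similarities are not, so normalize both score lists to a common range (for example, min-max scaling per query) before combining them. Tune alpha on your data.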
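The fusion formula, with per-query min-max normalization so the two scales are comparable, can be sketched as follows. This sketch assumes both lists score the same candidate documents in the same order. The value alpha = 0.6 is simply the midpoint of the typical range.

```python
def min_max(scores: list[float]) -> list[float]:
    """Rescale one result list to [0, 1]; a list with no spread carries no ranking signal."""
    if not scores:
        return []
    lo, hi = min(scores), max(scores)
    if hi == lo:
        return [0.0] * len(scores)
    return [(s - lo) / (hi - lo) for s in scores]


def hybrid_scores(dense: list[float], sparse: list[float], alpha: float = 0.6) -> list[float]:
    """final = alpha * dense + (1 - alpha) * sparse, computed on normalized scores."""
    d, s = min_max(dense), min_max(sparse)
    return [alpha * dv + (1 - alpha) * sv for dv, sv in zip(d, s)]


# Cosine similarities vs. raw BM25 scores for the same three candidates
print(hybrid_scores([0.82, 0.75, 0.60], [0.0, 7.3, 1.2]))  # the second document ranks first
```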

Multi-Hop Retrieval

For complex queries requiring connecting information across documents:

- Retrieve initial documents

- Extract entities or relationships

- Query again using extracted information

- Combine results

Example: "What did the CEO say about quarterly revenue in internal memos?" requires finding relevant memos, then finding CEO statements within them.

Agent-Specific Optimizations

Agentic RAG enables retrieval strategies too slow for basic RAG:

- Re-ranking: Use cross-encoder models to re-rank top-K results

- Expansion: Generate hypothetical answers, embed those for retrieval

- Metadata filtering: Pre-filter by date, author, or document type before vector search
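Metadata pre-filtering is easy to illustrate with plain lists. In this sketch the chunk dict shape (`id`, `vec`, `meta`) is assumed, and brute-force cosine similarity stands in for a vector database. Real vector stores usually accept the filter as part of the query itself.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def filtered_search(query_vec: list[float], chunks: list[dict], top_k: int = 3, **filters) -> list[str]:
    """Apply exact-match metadata filters first, then rank only the survivors by similarity."""
    candidates = [c for c in chunks if all(c["meta"].get(k) == v for k, v in filters.items())]
    candidates.sort(key=lambda c: cosine(query_vec, c["vec"]), reverse=True)
    return [c["id"] for c in candidates[:top_k]]


chunks = [
    {"id": "memo-1", "vec": [1.0, 0.0], "meta": {"doc_type": "memo", "year": 2024}},
    {"id": "memo-2", "vec": [0.6, 0.8], "meta": {"doc_type": "memo", "year": 2023}},
    {"id": "spec-1", "vec": [1.0, 0.1], "meta": {"doc_type": "spec", "year": 2024}},
]
print(filtered_search([1.0, 0.0], chunks, doc_type="memo"))  # ['memo-1', 'memo-2']
```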

Document Grading and Relevance Assessment

Document grading prevents the generator from using irrelevant context. It is the quality gate in the agent loop.

Grading Approaches

Binary Classification

LLM outputs yes/no for relevance:

prompt = f"Does this document answer the question? Question: {query} Document: {chunk} Answer with YES or NO only."

Relevance Scoring

The LLM outputs a relevance score from 0 to 10. Set a passing threshold (typically 7 or higher).
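Grader replies are free text, so parse them defensively. This sketch handles both the YES/NO format and the 0-10 format. Treating unparseable output as a failing grade ("fail closed") is a design choice, not a rule.

```python
import re
from typing import Optional


def parse_binary_grade(output: str) -> bool:
    """Interpret a YES/NO grader reply; anything not starting with YES counts as irrelevant."""
    return output.strip().upper().startswith("YES")


def parse_score_grade(output: str, threshold: int = 7) -> tuple[Optional[int], bool]:
    """Extract the first standalone integer 0-10 and compare it to the passing threshold."""
    match = re.search(r"\b(10|[0-9])\b", output)
    if match is None:
        return None, False  # unparseable output fails closed
    score = int(match.group(1))
    return score, score >= threshold
```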

Claim Verification

For fact-checking use cases, verify specific claims against documents.

Calibration

Grader strictness drifts over time. Calibrate regularly:

- Create labeled dataset of 100+ query-document pairs

- Run grader, compare to labels

- Adjust prompt or threshold to hit target precision/recall
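Once the grader has scored a labeled set, choosing the threshold can be automated. This sketch assumes integer 0-10 scores and boolean relevance labels. It returns the lowest threshold that meets a target precision: lower thresholds pass more documents, so this keeps recall as high as possible. The six-example dataset is only a toy. Use 100+ pairs as noted above.

```python
from typing import Optional


def pick_threshold(scores: list[int], labels: list[bool], target_precision: float = 0.9) -> Optional[int]:
    """Lowest 0-10 threshold whose precision on the labeled set meets the target, else None."""
    for t in range(0, 11):
        passed = [lab for s, lab in zip(scores, labels) if s >= t]
        if passed and sum(passed) / len(passed) >= target_precision:
            return t
    return None


scores = [9, 8, 7, 6, 5, 3]
labels = [True, True, False, True, False, False]
print(pick_threshold(scores, labels, target_precision=0.75))  # 6
```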

Research from LangChain shows that agentic RAG improves answer accuracy by 35% over basic RAG through iterative retrieval and grading cycles. This improvement comes from the validation layer catching poor retrievals before they reach the generator.

Handling Grading Failures

When no documents pass grading:

- Trigger query rewriting

- Try alternative data sources

- Fall back to general knowledge with uncertainty flag

- Ask user for clarification

Never pass ungraded documents to the generator in high-stakes applications.

Query Rewriting Techniques

Query rewriting transforms user questions into retrieval-optimized forms. It is essential when initial retrieval fails to return relevant documents.

Rewriting Strategies

HyDE (Hypothetical Document Embeddings)

Generate a hypothetical answer, then embed that for retrieval:

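```python
# HyDE: embed a hypothetical answer instead of the raw question.
# `llm` is a placeholder for your LLM client.
hypothetical_answer = llm.generate(f"Answer this question: {query}")
retrieval_query = hypothetical_answer  # this text is embedded and used for vector search
```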

This bridges the vocabulary gap between how users ask and how documents are written.

Sub-question Decomposition

Break complex queries into simpler parts:

Query: "How did this quarter's revenue compare to last quarter and what factors drove the change?"

Sub-queries:

- "What was this quarter's revenue?"

- "What was last quarter's revenue?"

- "What factors affected quarterly revenue?"

Retrieve for each sub-query, then combine the results.
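Merging per-sub-query results needs a rule for documents that appear more than once. This sketch keeps each document's best score. `search` is a hypothetical function that returns `(doc_id, score)` pairs. Reciprocal rank fusion is a common alternative merge rule.

```python
from typing import Callable


def retrieve_for_subqueries(
    sub_queries: list[str],
    search: Callable[[str], list[tuple[str, float]]],
    top_k: int = 5,
) -> list[tuple[str, float]]:
    """Run search per sub-query, keep each document's best score, return the merged top_k."""
    best: dict[str, float] = {}
    for sq in sub_queries:
        for doc_id, score in search(sq):
            best[doc_id] = max(score, best.get(doc_id, float("-inf")))
    return sorted(best.items(), key=lambda kv: kv[1], reverse=True)[:top_k]


# Toy results keyed by sub-query
fake_index = {
    "What was this quarter's revenue?": [("q3_report", 0.91), ("board_deck", 0.55)],
    "What was last quarter's revenue?": [("q2_report", 0.88), ("board_deck", 0.62)],
    "What factors affected quarterly revenue?": [("board_deck", 0.80)],
}
merged = retrieve_for_subqueries(list(fake_index), lambda q: fake_index[q])
print(merged)  # [('q3_report', 0.91), ('q2_report', 0.88), ('board_deck', 0.8)]
```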

Expansion

Add synonyms and related terms:

Query: "cloud costs"

Expanded: "cloud costs AWS Azure GCP infrastructure spending optimization"

Clarification

When queries are ambiguous, rewrite to specify intent:

Ambiguous: "Python performance"

Clarified: "Python code execution performance optimization techniques"

Rewrite Evaluation

Not all rewrites help. Track:

- Grading improvement: Do rewritten queries retrieve better documents?

- Iteration count: How many rewrites are needed on average?

- Answer quality: Final answer quality with vs without rewriting

If rewriting rarely helps, simplify or remove it to reduce latency.

Answer Generation with Citations

The final step synthesizes retrieved context into a coherent answer with source attribution.

Generation Prompt Engineering

Structure prompts to encourage proper citation:

Use the provided context to answer the question.

Cite sources using [Source: document_name].

If the context does not contain the answer, say "I don't have enough information."

Context:

{retrieved_chunks}

Question: {query}
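Filling that template programmatically is straightforward. The chunk schema (`source`, `text`) is an assumption of this sketch. Prefixing each chunk with its source name gives the model the exact string to cite.

```python
def build_prompt(query: str, chunks: list[dict]) -> str:
    """Fill the citation prompt; each chunk dict needs 'source' and 'text' keys (assumed schema)."""
    context = "\n\n".join(f"[Source: {c['source']}]\n{c['text']}" for c in chunks)
    return (
        "Use the provided context to answer the question.\n"
        "Cite sources using [Source: document_name].\n"
        "If the context does not contain the answer, say \"I don't have enough information.\"\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


print(build_prompt("What changed?", [{"source": "Quarterly_Report.pdf", "text": "Revenue rose."}]))
```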

Citation Formats

Choose citation style based on use case:

- Inline: "Revenue increased last quarter [Source: Quarterly_Report.pdf]"

- Footnotes: Numbered references at bottom

- Links: Clickable source URLs

Always include document names or IDs for verification.
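When citations use the inline `[Source: ...]` format, a simple check catches source names the model invented. This sketch only verifies that each cited name was actually retrieved, not that the cited document supports the claim. It complements manual spot-checks rather than replacing them.

```python
import re

CITATION = re.compile(r"\[Source: ([^\]]+)\]")


def check_citations(answer: str, retrieved_sources: set[str]) -> dict[str, list[str]]:
    """Split inline citations into ones matching retrieved documents and ones that don't."""
    cited = CITATION.findall(answer)
    return {
        "valid": [s for s in cited if s in retrieved_sources],
        "unknown": [s for s in cited if s not in retrieved_sources],
    }


answer_text = "Revenue increased [Source: Quarterly_Report.pdf] despite churn [Source: Memo_7.docx]."
print(check_citations(answer_text, {"Quarterly_Report.pdf"}))
# {'valid': ['Quarterly_Report.pdf'], 'unknown': ['Memo_7.docx']}
```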

Handling Conflicting Information

When retrieved documents disagree:

- Present multiple viewpoints with sources

- Note the conflict explicitly

- Indicate which source is more recent or authoritative

Do not arbitrarily select one answer when sources conflict.

Uncertainty Calibration

Train the generator to express appropriate confidence:

- High confidence: Direct statements with citations

- Medium confidence: "According to X, Y appears to be true"

- Low confidence: "The available information suggests..."

This builds user trust and prevents over-reliance on uncertain answers.

Production Deployment Patterns

Moving from prototype to production requires attention to reliability, monitoring, and cost.

Architecture Patterns

Async Processing

For non-interactive use cases, process queries asynchronously:

- Queue incoming queries

- Process through full agent loop

- Notify when complete

This tolerates longer latency and enables batch optimizations.

Caching Layers

Cache expensive operations:

- Query embeddings (reused across similar queries)

- Retrieval results (documents change slowly)

- LLM responses (for common questions)

Implement semantic caching where similar queries return cached results.
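A semantic cache compares query embeddings instead of exact strings. This is a minimal sketch. `embed` is any text-to-vector function you supply, the 0.92 similarity threshold is an assumed starting point, and at scale the linear scan would be replaced by a vector index. The toy bag-of-words embedding exists only to make the example runnable.

```python
import math
from typing import Callable, Optional


class SemanticCache:
    """Return a cached answer when a new query's embedding is close enough to a stored one."""

    def __init__(self, embed: Callable[[str], list[float]], threshold: float = 0.92) -> None:
        self.embed = embed          # text -> vector function (e.g. your embedding model)
        self.threshold = threshold  # minimum cosine similarity treated as "same question"
        self.entries: list[tuple[list[float], str]] = []

    @staticmethod
    def _cosine(a: list[float], b: list[float]) -> float:
        dot = sum(x * y for x, y in zip(a, b))
        norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
        return dot / norm if norm else 0.0

    def get(self, query: str) -> Optional[str]:
        vec = self.embed(query)
        best = max(self.entries, key=lambda e: self._cosine(vec, e[0]), default=None)
        if best is not None and self._cosine(vec, best[0]) >= self.threshold:
            return best[1]
        return None

    def put(self, query: str, answer: str) -> None:
        self.entries.append((self.embed(query), answer))


VOCAB = ["reset", "password", "refund", "policy"]


def toy_embed(text: str) -> list[float]:
    words = text.lower().split()
    return [1.0 if w in words else 0.0 for w in VOCAB]


cache = SemanticCache(toy_embed)
cache.put("how do I reset my password", "Use the reset link on the sign-in page.")
print(cache.get("reset password"))  # Use the reset link on the sign-in page.
print(cache.get("refund policy"))   # None
```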

Monitoring

Track operational metrics:

- Latency per state: Identify bottlenecks (typically retrieval or LLM calls)

- Loop iterations: Average rewrites per query

- Router accuracy: Correct routing decisions

- Grader calibration: Precision/recall on relevance

Track business metrics:

- Answer helpfulness: User feedback or task completion rates

- Citation accuracy: Manual spot-checks of sources

- Cost per query: LLM tokens + vector search costs
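Per-state latency is easy to collect by wrapping each step of the loop in a context manager. This minimal sketch uses only the standard library. The in-memory `latencies` dict stands in for a real metrics backend, and `p95` uses the nearest-rank method on a non-empty list.

```python
import math
import time
from collections import defaultdict
from contextlib import contextmanager

latencies: dict[str, list[float]] = defaultdict(list)  # state name -> durations in ms


@contextmanager
def timed(state: str):
    """Record how long one agent state takes, even if the step raises."""
    start = time.perf_counter()
    try:
        yield
    finally:
        latencies[state].append((time.perf_counter() - start) * 1000)


def p95(values: list[float]) -> float:
    """Nearest-rank 95th percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(0.95 * len(ordered)))
    return ordered[rank - 1]


with timed("retrieve"):
    time.sleep(0.01)  # stand-in for a vector search call
print(len(latencies["retrieve"]))  # 1
```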

Scaling Considerations

Agentic RAG handles 5x more complex queries than basic RAG through multi-step retrieval, but this comes at a cost:

- Multiple LLM calls per query (router, grader, rewriter, generator)

- Repeated vector searches

- State management overhead

Optimize:

- Use smaller models for routing and grading (keep large models for generation)

- Implement aggressive caching

- Batch retrieval when possible

- Set strict iteration limits

Fast.io for Agentic RAG Storage

While this guide focused on the orchestration layer, the storage layer is equally critical. Fast.io provides built-in infrastructure for agentic RAG workloads:

Built-In RAG with Intelligence Mode

Enable Intelligence Mode on any workspace and files are automatically indexed for semantic search. No separate vector database setup required.

- Auto-indexing of uploaded documents

- Semantic search across workspace content

- AI chat with citations

- Metadata extraction

251 MCP Tools

Fast.io exposes 251 Model Context Protocol tools for agent integration via the MCP server:

- Document upload and retrieval

- Semantic search

- Workspace management

- File versioning and locking

Agents interact with storage through standard MCP, compatible with Claude, GPT, Gemini, and local models.

Webhooks for Reactive Workflows

Build event-driven RAG pipelines:

- Get notified when files change

- Trigger reindexing automatically

- No polling required

Free Agent Tier

Start building without cost:

- 50GB storage

- 5,000 credits monthly

- No credit card required

- No trial expiration

This makes Fast.io suitable for agent prototyping and small-scale deployments.

Ownership Transfer

Build RAG systems for clients:

- Agent creates workspace and ingests documents

- Transfers ownership to human client

- Agent retains admin access for maintenance

This pattern supports agency workflows where you build RAG solutions for customers.

Frequently Asked Questions

What is agentic RAG?

Agentic RAG is a retrieval-augmented generation pattern where autonomous agents dynamically decide what to retrieve, when to retrieve it, and how to use retrieved information. Unlike basic RAG that performs a single retrieval pass, agentic RAG uses multi-step loops with query routing, document grading, and iterative rewriting to improve answer quality.

How is agentic RAG different from basic RAG?

Basic RAG retrieves documents once, then generates an answer. Agentic RAG adds decision-making loops where the system can route queries to different sources, grade retrieved documents for relevance, rewrite queries when needed, and iterate through multiple retrieval cycles. According to LangChain research, this approach improves answer accuracy by 35% and handles 5x more complex queries.

How do I implement agentic RAG?

Implement agentic RAG by building five components: a query router that selects data sources, a retriever that fetches documents, a grader that evaluates relevance, a rewriter that reformulates queries, and a generator that synthesizes answers. Use a state machine or framework like LangGraph to orchestrate the loop with transitions between these components. Complex queries typically go through several iteration cycles before reaching a final answer.

What storage architecture do I need for agentic RAG?

Agentic RAG requires a three-tier storage architecture: hot storage (vector database for embeddings), warm storage (document store for full text and metadata), and cold storage (object storage for original files). Workspace-isolated indexes prevent cross-contamination in multi-tenant systems. Event-driven updates keep indexes synchronized with source documents. Production systems should target p95 latency under 100ms for vector searches.

Can I use Fast.io for agentic RAG storage?

Yes. Fast.io provides built-in RAG capabilities through Intelligence Mode, which automatically indexes workspace files for semantic search. The 251 MCP tools allow agents to upload, search, and retrieve documents programmatically. Webhooks enable reactive workflows when files change. The free agent tier includes 50GB storage and 5,000 monthly credits with no credit card required.

What frameworks support agentic RAG implementation?

LangGraph is the most popular framework for building agentic RAG, providing graph-based state machines for orchestrating retrieval loops. Other options include LlamaIndex for simpler retrieval pipelines, Haystack for enterprise search applications, and custom implementations using state machines or workflow engines like Temporal. Most production implementations use LangGraph for the orchestration layer.
